Testing Mind Map Series: How to Think Like a CRO Pro (Part 78)

Interview with Greg Wendel

There’s the experimentation everyone talks about. And then there’s how it actually happens.

We’re hunting for signals in the noise to bring you conversations with people who live in the data. The ones who obsess over test design and know how to get buy-in when the numbers aren’t pretty.

They’ve built systems that scale. Weathered the failed tests. Convinced the unconvincible stakeholders.

And now they’re here: opening up their playbooks and sharing the good stuff.

This week, we’re chatting with Greg Wendel, Co-founder at Drumline Digital.

Greg, tell us about yourself. What inspired you to get into testing & optimization?

Early in my career, when I worked in more traditional marketing roles, I remember getting frustrated watching team members argue over things like which image to use or which corner of a mailout voucher to stick a discount code on, all without any real strategy for measuring the results. I didn’t yet have the words or the tools to understand exactly how to make better decisions, but I knew what I was seeing couldn’t be it.

After that role, I was already pretty set on building the skills to move into the digital space, and I started to come across experimentation as part of my self-guided learning. But it didn’t really take off until I landed at an agency with a small CRO practice, where I was fortunate to have a couple of mentors who encouraged me to dive in.

From there, I was hooked. Not only did it finally give me answers to those early-career frustrations, but for anyone who loves to learn new things, it’s such an exciting space. Running a great testing program requires so many skill sets that there’s always something new to pick up, which was a hook for me back then and continues to be today.

How many years have you been testing for?

It’s been around 8 years now, which feels like a long time and a short time all at once! I’ve been lucky enough to work with teams of all shapes, sizes, and industries in that time, and it’s been a great way to see how testing fits into different types of organisations.

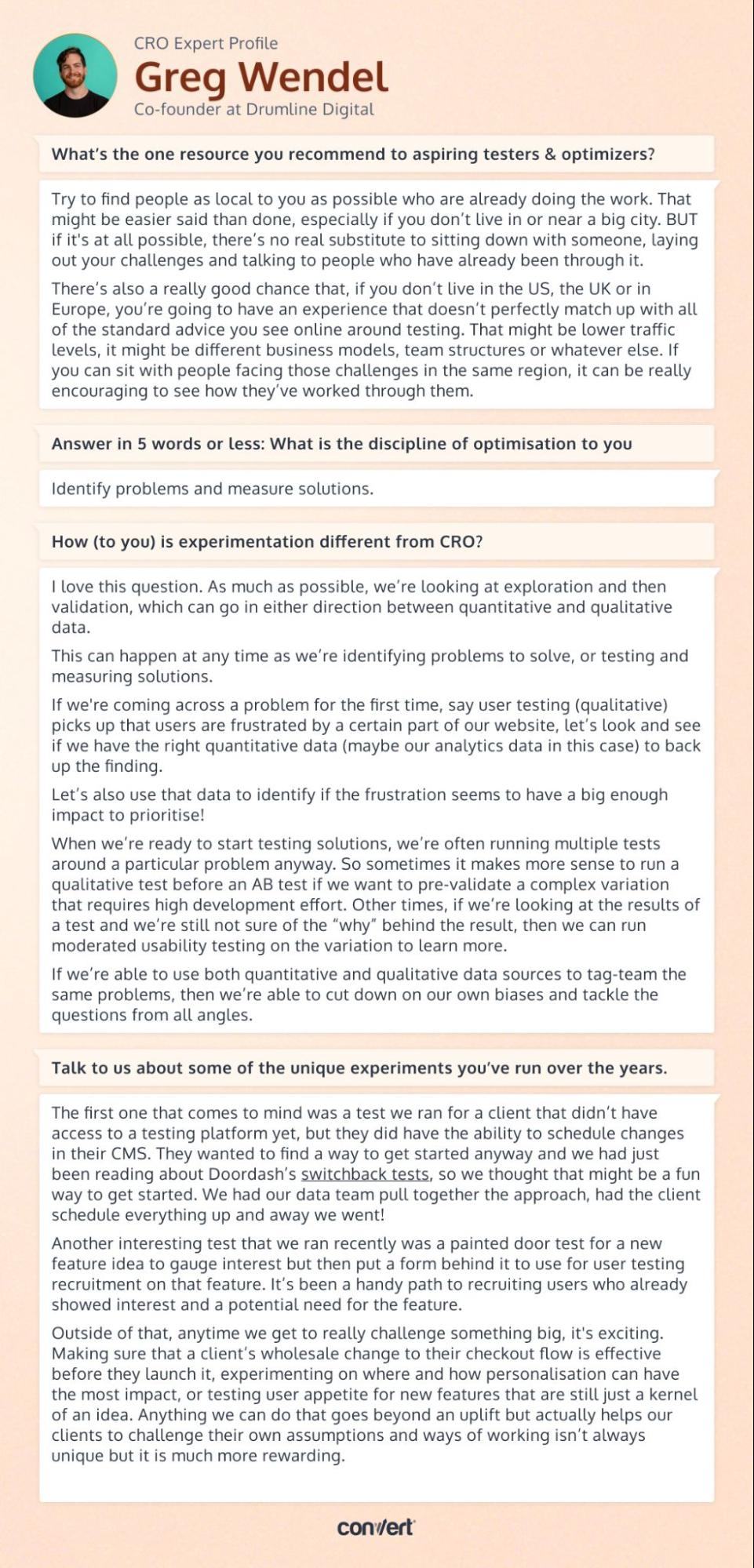

What’s the one resource you recommend to aspiring testers & optimizers?

Try to find people as local to you as possible who are already doing the work. That might be easier said than done, especially if you don’t live in or near a big city. BUT if it’s at all possible, there’s no real substitute for sitting down with someone, laying out your challenges, and talking to people who have already been through them.

There’s also a really good chance that, if you don’t live in the US, the UK or in Europe, you’re going to have an experience that doesn’t perfectly match up with all of the standard advice you see online around testing. That might be lower traffic levels, it might be different business models, team structures or whatever else. If you can sit with people facing those challenges in the same region, it can be really encouraging to see how they’ve worked through them.

Answer in 5 words or less: What is the discipline of optimization to you?

Identify problems and measure solutions

What are the top 3 things people MUST understand before they start optimizing?

I don’t know if this is exactly in the spirit of the question, but more than anything, I think getting into the right mindset is the most important starting point, so that’s where I’d focus my advice!

- Tie tests to a product or feature roadmap as much as possible. If tests aren’t tied to an organisation’s key delivery priorities, there’s a huge risk of optimisation being something that gets done on the side when there’s a little extra time. And how many of us actually have a lot of extra time?

Of course, you’re going to have lots of great ideas that don’t neatly tie to something you’ve got planned in the backlog, so not every test needs a clean line drawn to your roadmap. But where you can draw that link, it will help you deliver on the key metrics other teams really care about, especially if you’re operating in a dedicated testing role.

Plus, testing ideas that are already on your roadmap means you carry real weight when you say, “This test saved us $X million”!

- Customer needs and business needs are 1A and 1B in your testing program. It sounds so obvious that a business thrives when it delivers value for its customers, but it’s incredibly easy to get lost in a mindset of “I have to hit a number this quarter” and lose focus on the customer needs that drive the numbers long term.

Conversely, it’s also easy to get stuck solving problems customers raise in user testing that don’t move the needle on what is important to an organisation. Balancing both perspectives is key.

- Recognise when you’re getting stuck on the little details and missing the big picture. We all get stuck on details occasionally; it’s just going to happen at some point. There are so many skill sets that go into great testing, and so many little micro-processes that can be set up to move a test from idea to production and measurement, that it’s easy to get lost in the minutiae.

When that happens, just remind yourself that, at the end of the day, you’re trying to make better informed decisions. As long as you’re doing that consistently, you’re heading in the right direction.

How do you treat qualitative & quantitative data to minimize bias?

I love this question. As much as possible, we’re looking at exploration and then validation, which can go in either direction between quantitative and qualitative data.

This can happen at any time as we’re identifying problems to solve, or testing and measuring solutions.

If we’re coming across a problem for the first time (say, user testing, which is qualitative, picks up that users are frustrated by a certain part of our website), let’s look and see whether we have the right quantitative data, maybe our analytics data in this case, to back up the finding.

Let’s also use that data to identify if the frustration seems to have a big enough impact to prioritise!

When we’re ready to start testing solutions, we’re often running multiple tests around a particular problem anyway. Sometimes it makes more sense to run a qualitative test before an A/B test, for example if we want to pre-validate a complex variation that requires high development effort. Other times, if we’re looking at the results of a test and still aren’t sure of the “why” behind the result, we can run moderated usability testing on the variation to learn more.

If we’re able to use both quantitative and qualitative data sources to tag-team the same problems, then we’re able to cut down on our own biases and tackle the questions from all angles.

How (to you) is experimentation different from CRO?

I tend to talk about experimentation as a big, all-encompassing umbrella. As long as there is an identified problem, a potential solution, and a way to measure the impact of that solution, then that is very likely a type of experiment. Not always a rigorous one, but hey, still an experiment. That includes A/B testing, qualitative testing of new designs, feature flags, personalised experiences, you name it.

I see CRO as a subset of that, using many of the same methodologies (problem, solution, measure) and applying them with a very specific focus in mind: increasing conversions on a website.

I will say it’s not an opinion I hold super strongly. At the end of the day, it’s really just semantics, and the term people use often depends on their background. Folks in growth or ecommerce teams are often introduced to the field through the term “CRO”, and I’ve seen people describe “CRO” exactly the way I see “experimentation”.

So until I hear someone explain otherwise, I just assume we mean the same things!

Talk to us about some of the unique experiments you’ve run over the years?

The first one that comes to mind was a test we ran for a client that didn’t have access to a testing platform yet, but did have the ability to schedule changes in their CMS. They wanted to get started anyway, and we had just been reading about DoorDash’s switchback tests, so we thought that might be a fun way to do it. We had our data team pull together the approach, had the client schedule everything, and away we went!

Another interesting test we ran recently was a painted door test for a new feature idea. It gauged interest, but we also put a form behind it to recruit participants for user testing on that feature. It’s been a handy way to recruit users who have already shown interest in, and a potential need for, the feature.

Outside of that, any time we get to really challenge something big, it’s exciting: making sure a client’s wholesale change to their checkout flow is effective before they launch it, experimenting on where and how personalisation can have the most impact, or testing user appetite for new features that are still just a kernel of an idea. Work that goes beyond an uplift and actually helps our clients challenge their own assumptions and ways of working isn’t always unique, but it is much more rewarding.

What’s your spicy take on AI?

I’m not sure it’s a spicy take but the people getting the most out of AI right now are the senior practitioners – we can apply that to product, marketing, ecomm, dedicated optimisation teams, whatever it is.

The people who have been on the tools, building their team processes and workflows, they’re the people who know where the sticky points are and, most importantly, they know how to validate what good outputs look like because they’ve had to create those outputs themselves. If someone doesn’t know what a good output should look like, how can they possibly be expected to get the right outputs from an AI tool?

Ok, maybe I can spice it up just a little bit then. If an organisation isn’t putting its senior practitioners, the ones who are still on the tools or have been within the past couple of years, at the forefront of setting its AI strategy and deciding how it wants to use AI, then it shouldn’t be using AI yet.

Cheers for reading! If you’ve caught the CRO bug… you’re in good company here. Be sure to check back often; we have fresh interviews dropping twice a month.

And if you’re in the mood for a binge read, have a gander at our earlier interviews with Gursimran Gujral, Haley Carpenter, Rishi Rawat, Sina Fak, Eden Bidani, Jakub Linowski, Shiva Manjunath, Deborah O’Malley, Andra Baragan, Rich Page, Ruben de Boer, Abi Hough, Alex Birkett, John Ostrowski, Ryan Levander, Ryan Thomas, Bhavik Patel, Siobhan Solberg, Tim Mehta, Rommil Santiago, Steph Le Prevost, Nils Koppelmann, Danielle Schwolow, Kevin Szpak, Marianne Stjernvall, Christoph Böcker, Max Bradley, Samuel Hess, Riccardo Vandra, Lukas Petrauskas, Gabriela Florea, Sean Clanchy, Ryan Webb, Tracy Laranjo, Lucia van den Brink, LeAnn Reyes, Lucrezia Platé, Daniel Jones, May Chin, Kyle Hearnshaw, Gerda Vogt-Thomas, Melanie Kyrklund, Sahil Patel, Lucas Vos, David Sanchez del Real, Oliver Kenyon, David Stepien, Maria Luiza de Lange, Callum Dreniw, Shirley Lee, Rúben Marinheiro, Lorik Mullaademi, Sergio Simarro Villalba, Georgiana Hunter-Cozens, Asmir Muminovic, Edd Saunders, Marc Uitterhoeve, Zander Aycock, Eduardo Marconi Pinheiro Lima, Linda Bustos, Marouscha Dorenbos, Cristina Molina, Tim Donets, Jarrah Hemmant, Cristina Giorgetti, Tom van den Berg, Tyler Hudson, Oliver West, Brian Poe, Carlos Trujillo, Eddie Aguilar, Matt Tilling, Jake Sapirstein, Nils Stotz, Hannah Davis, Jon Crowder, and Mike Fawcett.

Written By

Greg Wendel

Edited By

Carmen Apostu