Testing Mind Map Series: How to Think Like a CRO Pro (Part 7)

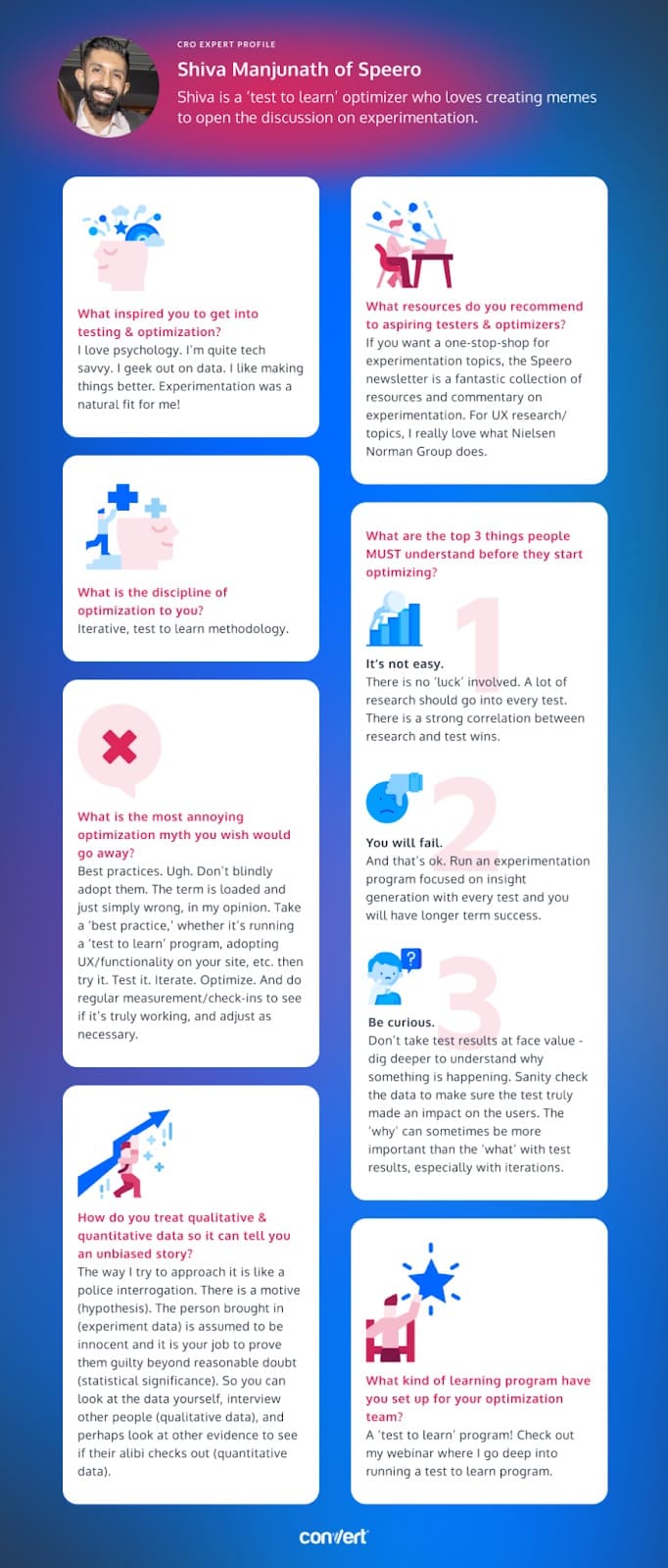

Interview with Shiva Manjunath of Speero

You’ve probably heard of the famed Sherlock Holmes, but did you know he had a rival in crime solving (CRO)?

Shiva Manjunath is his name, and he isn't intimidated by rascals (experiment data). He starts with motives (hypotheses) to prove rogues guilty beyond reasonable doubt (statistical significance).

There will be no crimes against your business model committed on his watch!

Let’s learn more about his method…

Shiva, tell us about yourself. What inspired you to get into testing & optimization?

Would you believe that I was preparing to become a doctor in college? I was pre-med, but after graduating I realized I absolutely hated medicine. Kudos and much respect to all the doctors and nurses on the front line, but it’s just not for me.

After realizing I also didn’t have the skills to play hockey professionally, I spent a long time thinking hard about what I truly wanted to do. I love psychology. I’m quite tech savvy. I geek out on data. I like making things better. Experimentation was a natural fit for me!

How many years have you been optimizing for? What’s the one resource you recommend to aspiring testers & optimizers?

I’ve been involved in digital marketing for 8 years, and I’ve had truly hands-on experience running optimization programs for 5+ years. But really, you asked how many years I have been ‘optimizing’ for, and that’s 29 years 😉 I’ve found that I’ve actually been optimizing lots of little things in my life: my schedule, my diet, even my gaming, so I can get better.

In terms of resources, I’d say there are a few categories. Definitely get a good mixture of UX research, experimentation updates, and general program management topics. If you want a one-stop shop for experimentation topics, the Speero newsletter (the latest edition was written by yours truly) is a fantastic collection of resources and commentary on experimentation. For UX research topics, I really love what Nielsen Norman Group does.

Answer in 5 words or less: What is the discipline of optimization to you?

Iterative, test-to-learn methodology

What are the top 3 things people MUST understand before they start optimizing?

- It’s not easy. There is no ‘luck’ involved. A lot of research should go into every test. There is a strong correlation between research and test wins. The foundation of optimization is research – don’t skimp on research.

- You will fail. And that’s OK. Run an experimentation program focused on generating insights from every test, and you will have longer-term success.

- Be curious. Don’t take test results at face value – dig deeper to understand why something is happening. Sanity check the data to make sure the test truly made an impact on the users. The ‘why’ can sometimes be more important than the ‘what’ with test results, especially with iterations.

How do you treat qualitative & quantitative data so it can tell you an unbiased story?

Ah, this is interesting. I try to approach it like a police interrogation. There is a motive (hypothesis), but you can’t assume the person you brought in for questioning is guilty. The person brought in (experiment data) is presumed innocent, and it is your job to prove them guilty beyond reasonable doubt (statistical significance). So you can look at the data yourself, interview other people (qualitative data), and perhaps check bank statements or the logs of when someone clocked in and out of work to see if their alibi checks out (quantitative data).

Maybe not the best example, but you always have to approach it objectively. Corroborate data sources (e.g., heatmaps and on-site polls alongside quantitative data) to build a story, then see whether that story supports the hypothesis or not. With statistical rigor, obviously!
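To make the interrogation analogy concrete: "presumed innocent" plays the role of the null hypothesis (the variant makes no difference), and "beyond reasonable doubt" plays the role of a significance threshold. The sketch below is only an illustration under stated assumptions, not Shiva's or Speero's method: it uses one common frequentist test (a two-proportion z-test), a conventional threshold of 0.05 chosen here as an assumption, and hypothetical conversion counts.

```python
from math import sqrt
from statistics import NormalDist

# Assumed "reasonable doubt" threshold; 0.05 is a common convention, not a rule.
ALPHA = 0.05


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for H0: A and B share one conversion rate."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Under the null hypothesis ("presumed innocent"), pool both groups' data.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("pooled rate is 0 or 1; the test is undefined")
    z = (p_b - p_a) / se
    # Two-sided p-value: how surprising this gap would be if H0 were true.
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical data: control converts 200/5000, variant converts 250/5000.
z, p = two_proportion_z_test(200, 5000, 250, 5000)
verdict = "reject H0 (guilty)" if p < ALPHA else "not enough evidence (acquitted)"
print(f"z = {z:.2f}, p = {p:.4f}: {verdict}")
```

As in court, failing to reach the threshold means "not proven," not "proven innocent": a non-significant result does not show the variant had no effect.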

What kind of learning program have you set up for your optimization team? And why did you take this specific approach?

A ‘test to learn’ program! Check out my webinar, where I go deep into running a test-to-learn program. I don’t want to spoil anything, but I use lots of visualizations and examples there to show how best to do this, and why I take this approach!

What is the most annoying optimization myth you wish would go away?

Best practices. Ugh. Don’t blindly adopt them. The term is loaded and simply wrong, in my opinion. ‘Best practices’ tend to be things that have worked for a lot of people, so they’re worth trying. And I’m never a proponent of NOT trying something. But blindly adopting a ‘best practice’ can cause serious issues. Take a ‘best practice,’ whether it’s running a ‘test to learn’ program or adopting some UX or functionality on your site, and try it. Test it. Iterate. Optimize. Then measure and check in regularly to see if it’s truly working, and adjust as necessary.

Download the infographic above to use when inspiration becomes hard to find!

Hopefully, our interview with Shiva gave you some useful pointers to take your conversion strategy in the right direction! What advice resonated most of all?

Be sure to stay tuned for our next interview with a CRO expert who takes us through even more advanced strategies! And if you haven’t already, check out our interviews with Gursimran Gujral of OptiPhoenix, Haley Carpenter of Speero, Rishi Rawat of Frictionless Commerce, Sina Fak of ConversionAdvocates, and Eden Bidani of Green Light Copy!