Testing Mind Map Series: How to Think Like a CRO Pro (Part 27)

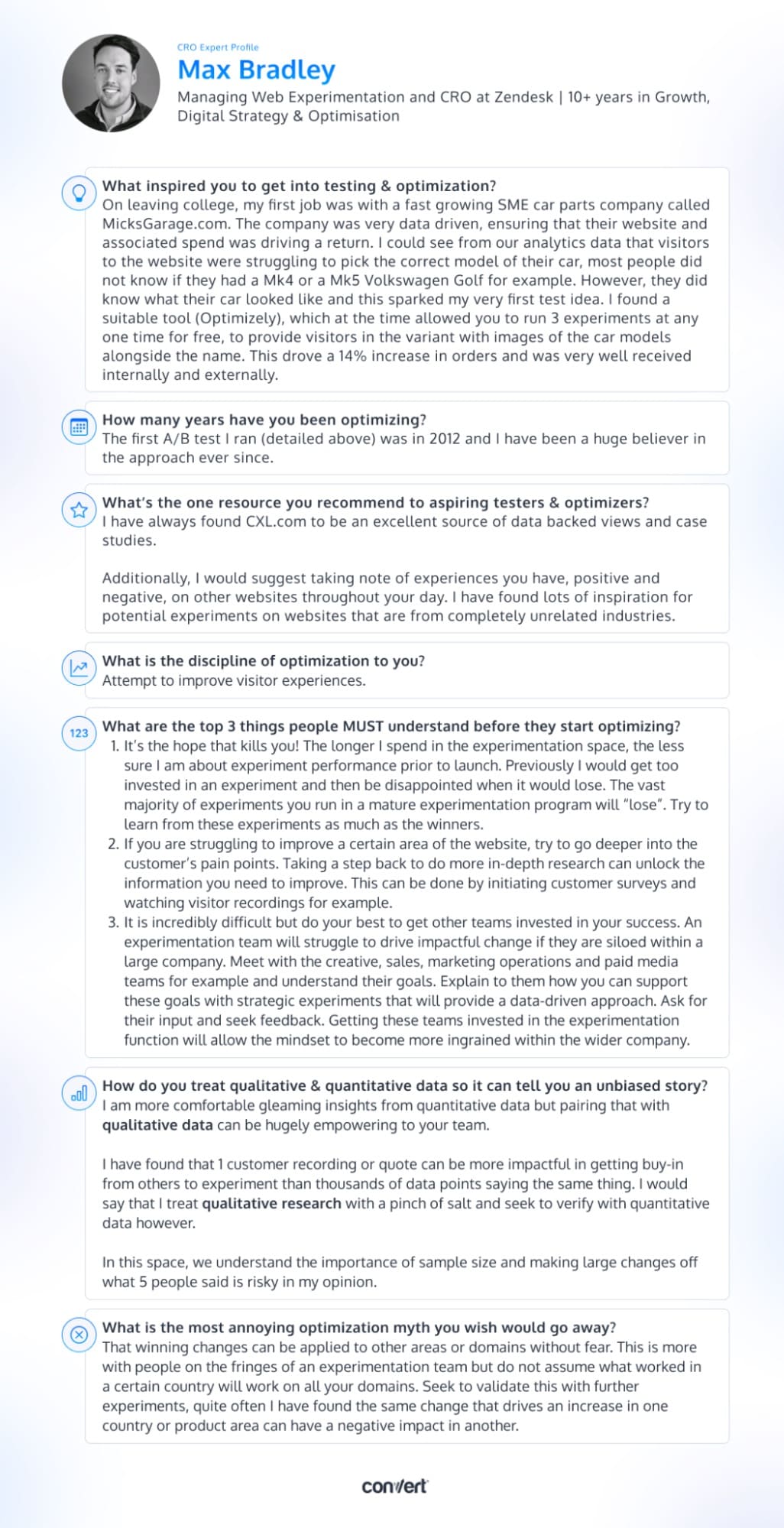

Interview with Max Bradley

Max Bradley, a veteran optimizer with 10 years of experience under his belt, knows that it’s not the success or failure of an experiment that defines success in optimization but rather the lessons learned along the way. By embracing losses and using them as an opportunity for growth and learning, optimizers can continually improve their strategies and drive true success.

Read on to discover Max’s take on the ups and downs of running experiments, the value of in-depth customer research, and the importance of getting creative with cross-team collaboration.

Max, tell us about yourself. What inspired you to get into testing & optimization?

On leaving college, my first job was with a fast-growing SME car parts company called MicksGarage.com. The company was very data-driven, ensuring that its website and associated spend were driving a return. I could see from our analytics data that visitors to the website were struggling to pick the correct model of their car. Most people did not know if they had a Mk4 or a Mk5 Volkswagen Golf, for example. However, they did know what their car looked like, and this sparked my very first test idea. I found a suitable tool (Optimizely), which at the time allowed you to run 3 experiments at any one time for free, and used it to show visitors in the variant images of the car models alongside the names. This drove a 14% increase in orders and was very well received internally and externally.

How many years have you been optimizing?

The first A/B test I ran (detailed above) was in 2012 and I have been a huge believer in the approach ever since.

What’s the one resource you recommend to aspiring testers & optimizers?

I have always found CXL.com to be an excellent source of data-backed views and case studies.

Additionally, I would suggest taking note of experiences you have, positive and negative, on other websites throughout your day. I have found lots of inspiration for potential experiments on websites that are from completely unrelated industries.

Answer in 5 words or less: What is the discipline of optimization to you?

Attempt to improve visitor experiences.

What are the top 3 things people MUST understand before they start optimizing?

- It’s the hope that kills you! The longer I spend in the experimentation space, the less sure I am about experiment performance prior to launch. Previously I would get too invested in an experiment and then be disappointed when it lost. The vast majority of experiments you run in a mature experimentation program will “lose”. Try to learn as much from these experiments as you do from the winners.

- If you are struggling to improve a certain area of the website, try to go deeper into the customer’s pain points. Taking a step back to do more in-depth research can unlock the information you need to improve. This can be done by running customer surveys and watching visitor recordings, for example.

- It is incredibly difficult, but do your best to get other teams invested in your success. An experimentation team will struggle to drive impactful change if it is siloed within a large company. Meet with the creative, sales, marketing operations, and paid media teams, for example, and understand their goals. Explain to them how you can support these goals with strategic experiments that will provide a data-driven approach. Ask for their input and seek feedback. Getting these teams invested in the experimentation function will allow the mindset to become more ingrained within the wider company.

How do you treat qualitative & quantitative data so it tells an unbiased story?

I am more comfortable gleaning insights from quantitative data, but pairing that with qualitative data can be hugely empowering to your team.

I have found that 1 customer recording or quote can be more impactful in getting buy-in from others to experiment than thousands of data points saying the same thing. That said, I treat qualitative research with a pinch of salt and seek to verify it with quantitative data.

In this space, we understand the importance of sample size. Making large changes based on what 5 people said is risky, in my opinion.
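To put a rough number on the sample size point, here is a minimal sketch using the standard normal-approximation formula for comparing two conversion rates. The function name `sample_size_per_variant`, the 3% baseline order rate, and the 5% significance and 80% power defaults are assumptions for illustration and are not from the interview. Only the 14% relative lift echoes the first test described earlier.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Approximate visitors needed per variant to detect a relative lift.

    Uses the normal approximation for a two-sided, two-proportion test.
    All inputs are assumptions supplied by the caller.
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


# Hypothetical example: 3% baseline order rate, 14% relative lift.
needed = sample_size_per_variant(baseline_rate=0.03, relative_lift=0.14)
print(f"Visitors needed per variant: {needed}")
```

Under these assumed inputs the required sample runs far beyond a handful of interviews, which is why a quote from 5 people works better as a source of hypotheses than as proof.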

What is the most annoying optimization myth you wish would go away?

That winning changes can be applied to other areas or domains without fear. This is more common among people on the fringes of an experimentation team, but do not assume that what worked in a certain country will work on all your domains. Seek to validate this with further experiments. Quite often I have found that the same change that drives an increase in one country or product area can have a negative impact in another.
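One way to check whether a winner carries over is to analyze each domain's experiment separately rather than pooling the results. The sketch below runs a basic two-proportion z-test per domain. The function name, the domain labels, and all of the counts are hypothetical and only illustrate the shape of the check.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (relative_lift, two_sided_p_value) for variant B vs control A."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return (rate_b - rate_a) / rate_a, p_value


# Hypothetical counts: (control orders, control visitors, variant orders, variant visitors)
results_by_domain = {
    "domain_one": (600, 20000, 690, 20000),
    "domain_two": (500, 20000, 440, 20000),
}

for domain, counts in results_by_domain.items():
    lift, p_value = two_proportion_z_test(*counts)
    print(f"{domain}: relative lift {lift:+.1%}, p-value {p_value:.3f}")
```

Keeping the domains separate makes it visible when the same change points in opposite directions, something a single pooled number would hide.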

Download the infographic above and add it to your swipe file for a little dose of inspiration when you’re feeling stuck!

Max’s insights into the world of CRO have been nothing short of refreshing and eye-opening. We hope this interview has given you some valuable insights and advice on how to experiment more effectively.

What advice resonated most with you?

Check back twice a month for upcoming interviews! And if you haven’t already, check out our past interviews with CRO legends Gursimran Gujral, Haley Carpenter, Rishi Rawat, Sina Fak, Eden Bidani, Jakub Linowski, Shiva Manjunath, Deborah O’Malley, Andra Baragan, Rich Page, Ruben de Boer, Abi Hough, Alex Birkett, John Ostrowski, Ryan Levander, Ryan Thomas, Bhavik Patel, Siobhan Solberg, Tim Mehta, Rommil Santiago, Steph Le Prevost, Nils Koppelmann, Danielle Schwolow, Kevin Szpak, Marianne Stjernvall, and our latest with Christoph Böcker.

Written By

Max Bradley

Edited By

Carmen Apostu