Testing Mind Map Series: How to Think Like a CRO Pro (Part 17)

Interview with Bhavik Patel

As the Head of Product Analytics at Hopin and the founder of CRAP Talks (Conversion Rate Optimisation, Analytics & Product Talks), Bhavik Patel knows a thing or two about what it takes to implement a successful optimization campaign.

In this interview, Bhavik advocates for the importance of critical thinking in conversion rate optimization.

To be successful in CRO, you must be able to recognize biases in experiments and separate signal from noise in results.

Without these skills, it’s all too easy to make decisions that hurt rather than help.

However, with a rigorous and evidence-based approach, you can set yourself up for success.

Let’s dive into Bhavik’s recommendations…

Bhavik, tell us about yourself. What inspired you to get into testing & optimization?

I think, like most people, I just fell into the field of experimentation. I graduated university with a degree in Mathematics and I went looking for any job that was even remotely related to my field of study. I didn’t want to teach and I didn’t want to work in finance but at the time those seemed like my only options. I stumbled into a performance marketing role at a media agency that turned out to be numbers-focused. You’re constantly optimising for ad-spend, ROI, CPAs, click-through rates, and conversion.

But it was only when I moved into an in-house role a few years later and started doing product analytics and site optimisation that I properly discovered the field of “experimentation”. Back then, there was none of the literature that exists now on the topic, so you sort of just had to learn through trial and error. I threw myself back into statistics to ensure that I was doing “proper” A/B testing. Every time I came across a new concept, I devoured as much content as I possibly could to ensure that I not only understood it, but could explain it to someone else without the technical jargon.

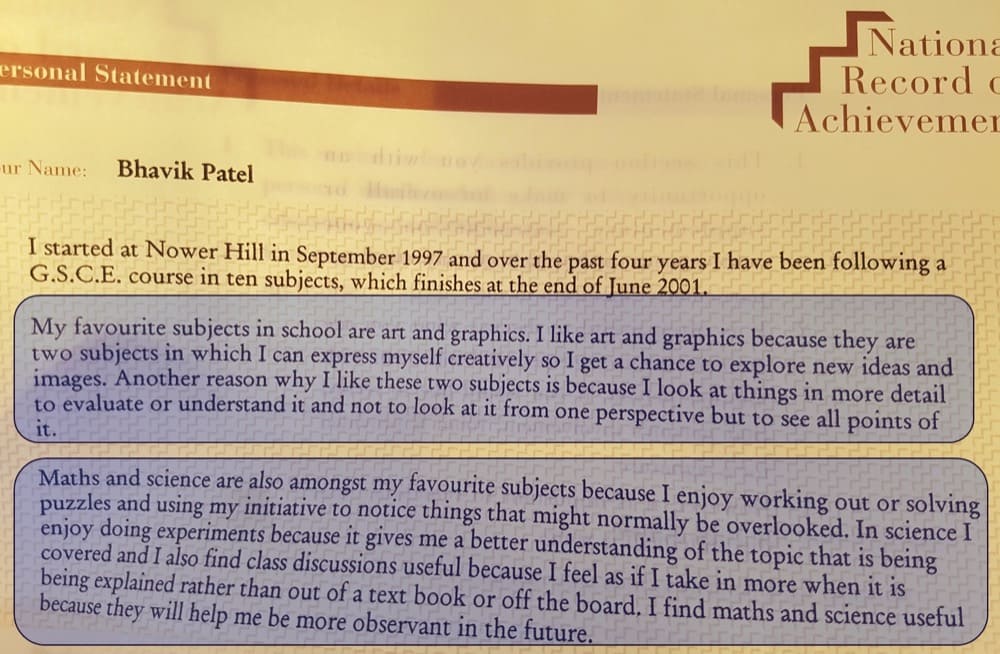

A few years ago, I dug out my national record of achievement and a personal statement I had written back in 2000 (I would have been 15 back then) and I realised that 22 years on, nothing has changed. I still paint and draw, I still like doing experiments and using maths to help me understand the world and I still learn better through discussions than out of a book.

How many years have you been optimizing for? What’s the one resource you recommend to aspiring testers & optimizers?

I would say I’ve been doing conscious optimisation since 2012, so for about 10 years.

As far as resources go, honestly, I don’t think there’s any one resource. If you have to force an answer out of me, I would say it’s your brain. Your ability to learn and your ability to think are the best resources you have: to be able to sit and contemplate what’s in front of you and understand how it can be applied to your domain, to recognise the biases in your experiments, and to separate the signal from the noise in your results. I spend a lot of time thinking. So for me, the best resource is your brain.

Answer in 5 words or less: What is the discipline of optimization to you?

To distinguish facts from opinions.

What are the top 3 things people MUST understand before they start optimizing?

- Statistics

- Their company’s unit economics

- Their customers

How do you treat qualitative & quantitative data so it tells an unbiased story?

This is an interesting question. You can’t guarantee it, right? You can only hope that one supports the other to minimise your likelihood of bias. If they do, then the probability of bias in your interpretation is low. If they don’t, then your interpretations are wrong, the data is incomplete or the story is biased.

I also think the point at which you form your hypotheses will determine how biased your story is. If you collect data, and then make a hypothesis and share it as a fact without retesting the hypothesis, then you’re manipulating the story.

What kind of learning program have you set up for your optimization team? And why did you take this specific approach?

I’ve never really worked with a centralised optimisation team, therefore no learning program.

I prefer a hub-and-spoke model where analysts are embedded into multidisciplinary teams that are collectively solving the same problem. The team comes up with ideas they want to test, and the analyst plays the role of a moral compass to ensure they don’t steer off the course of experimental righteousness. This happens through discussions, centralised documentation, best practices, retros, stand-ups, etc. It’s not a program, it’s a process.

What is the most annoying optimization myth you wish would go away?

At the risk of pissing people off, I think it’s this myth that CRO is a job title or a function.

It’s not, it’s a skillset, one that any team or person can learn. I see “experts” who continuously peddle this idea that only they have the knowledge to do what they do. In reality, “CROs” lack the deeper domain-level knowledge of a marketing team, product team, finance team, customer service team, etc. that is needed to take experimentation beyond surface-level testing.

Thanks to Bhavik for sharing so many valuable insights! Save the abridged version of the interview below in your swipe file for when inspiration is hard to come by.

Hopefully, our interview with Bhavik will help guide your experimentation strategy in the right direction!

What advice resonated most with you?

Keep an eye out for our next interview with a CRO expert who takes us through even more advanced strategies!

And if you haven’t already, check out our interviews with CRO legends Gursimran Gujral, Haley Carpenter, Rishi Rawat, Sina Fak, Eden Bidani, Jakub Linowski, Shiva Manjunath, Deborah O’Malley, Andra Baragan, Rich Page, Ruben de Boer, Abi Hough, Alex Birkett, John Ostrowski, Ryan Levander, and our latest with Ryan Thomas.