Can A/B Testing Hurt Your Business? 4 Ways Using the Wrong A/B Testing Tool Can Hurt Your Business

Don’t ignore your A/B testing tools. They’re too critical for your business to dismiss as just another Martech product in your tech stack.

How you allocate your marketing budget, the amount of traffic your site gets, the size of your operating overhead, and the possibility of getting slapped with a 20-million-euro fine all have a lot to do with your choice of testing tool.

So, pay attention to this.

Here are some ways the wrong A/B testing tool is either costing your company money or putting it at risk of losing money:

Loss of Focus on Your Optimization Goals

All-in-one tools are convenient — if you have a need for all those features. But the thing is, you most likely don’t. In fact, a 2019 Gartner study showed that 42% of marketers in the UK and North America underutilize their marketing stack.

So, you see, from a vendor’s point of view, lots of new features are exciting. They’re great for marketing. But when it comes to the task an optimizer actually needs the tool for, what features are available?

How easy will it be to navigate the cluttered UI of a tool that tries to do everything but does nothing very well?

When you get such tools, there’s usually a more interesting catch: The base plans come packed with features that help you gather qualitative data. But when it comes to setting up and running a test, you’re stuck because the features you need are now held behind a more expensive plan.

Sometimes that’s the enterprise plan. “Well, let’s just shell out the extra bucks.” Another catch there: The pricing is custom and now you have to get on the phone with the sales team to figure out how much you’ll pay, which ends up running into five figures.

And that’s not all…

All-in-one testing platforms reduce the decision-making power of A/B testing because users are encouraged to depend on heatmaps, session recordings, etc. At the end of the day, these don’t help you make better marketing or development decisions.

Take heatmaps, for instance. They do not tell you exactly where a user’s attention is, just where the mouse pointer is. That’s why Peep Laja called them “a poor man’s eye-tracking tool.”

Trying to correlate how people use their mouse with where they’re paying attention leads to inaccurate judgment.

Research by Google showed:

- 94% of people showed no vertical correlation between mouse movement and eye-tracking

- 81% showed no horizontal correlation between mouse movement and eye-tracking, and

- 10% of people hovered over a link and moved on to read other things on a page

Ask yourself right now: Is your eye where your mouse pointer is?

Heatmaps aren’t proven to help with measuring and representing risk. And certainly not decision-making. They’re actually proven to cause errors and confusion.

So, in the end, it isn’t even useful qualitative data. It wastes investment and slows down goal achievement.

What you need to be more productive and efficient is a tool that does what you want without clutter. Gartner’s research supports this: marketers who used an integrated suite approach to their marketing technology were only 28% effective in achieving marketing goals, compared to 45% for marketers who chose tools tailored exactly to their unique needs.

You want an A/B testing tool that equips you and your team with all the features you need to execute complex tests from start to finish — in your existing plan.

Take the CRO agency Mintminds, which was challenged with improving the product page of an eCommerce brand.

They assessed heatmaps and found people paid attention to certain sections on the page. They used this to develop 4 variants of the page that made it easier to access the info the data showed people were looking for.

Then they set up the test on Convert Experiences and ran it. The result? None of the variants beat the control. Instead, all 4 lowered the conversion rate.

The next thing they did was turn to user research. From the insights they mined, they created a new variant for the page with a different hypothesis this time. They ran it against the control for 28 days and got a different result.

The Add to Cart rate was 13% higher than the control’s, and the order conversion rate went up by 4.96%, boosting revenue per visitor by 6.58%.
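For readers who want to see how relative figures like these are derived, here is a minimal sketch. The visitor and add-to-cart counts are hypothetical, chosen only so the arithmetic is easy to follow; they are not the Mintminds data, and `relative_lift` is an illustrative helper, not part of any tool.

```python
def relative_lift(control_rate: float, variant_rate: float) -> float:
    """Relative change of the variant over the control, as a fraction."""
    if control_rate <= 0:
        raise ValueError("control_rate must be positive")
    return (variant_rate - control_rate) / control_rate


# Hypothetical counts, for illustration only.
control_visitors, control_adds = 10_000, 1_000  # 10.0% Add to Cart rate
variant_visitors, variant_adds = 10_000, 1_130  # 11.3% Add to Cart rate

lift = relative_lift(
    control_adds / control_visitors,
    variant_adds / variant_visitors,
)
print(f"Add to Cart lift: {lift:.1%}")
```

Note that a relative lift is not the same as a change in percentage points: in this made-up example the rate moves by 1.3 percentage points, which is a 13% improvement relative to the control.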

As the headline on the full story’s page says, “Data without context is misleading.”

Loss of Valuable Traffic

Just as foot traffic is important to brick-and-mortar stores, web traffic is vital to online companies. There are over 4.5 billion people using the internet worldwide. These people are the target of most marketing actions online. Companies use SEO and PPC to entice web traffic to their websites, hoping to sell to them. These techniques are so effective and in demand that PPC expenditure surpassed $10 billion in 2017 while SEO services are expected to hit $80 billion this year in the US alone!

Chances are your team is using SEO, PPC, or both to drive traffic to your website. The effort and resources you spend on driving traffic may all be in vain if you’re using the wrong A/B testing tool.

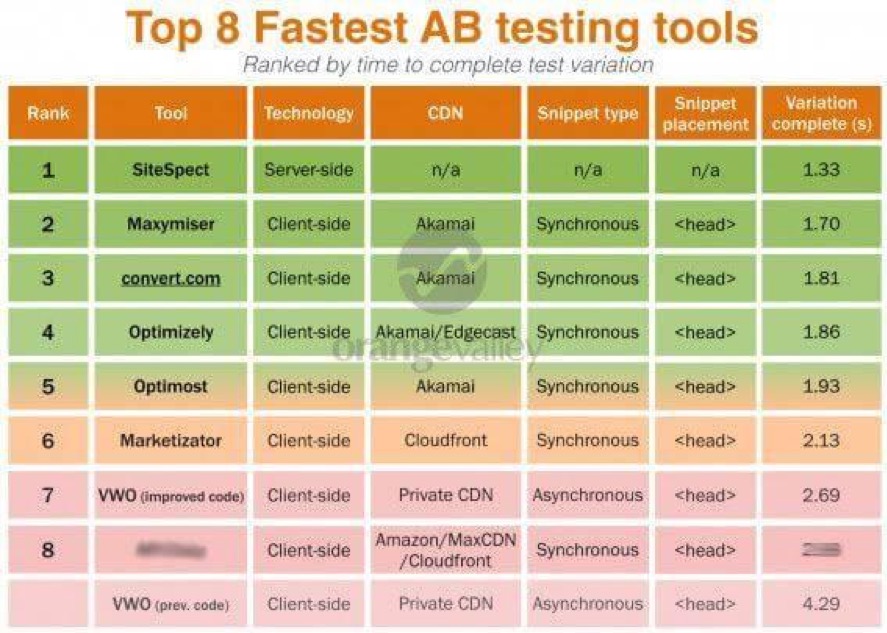

A/B testing tools are not created equal. Some may impact your website’s load time.

Page speed is important as it affects conversions, organic rankings and user experience. When testing on a page, your A/B testing tool can increase page load time because of the extra script on your page and the time it takes to serve new variants.

A Google study found that a one-second delay in mobile page speed can reduce conversions by as much as 20%. With over 3 billion people using their smartphones to access the internet, faster mobile page speed is more important than ever before.

Another area where your A/B testing tool could cost you hard-earned traffic is flash of original content (FOOC).

This is also known as flickering in testing. It occurs when visitors to test pages see the original page before the test variation is loaded. It happens in a flash.

Some testing tools load their script asynchronously to reduce their impact on your page speed. But this can cause flickering. Visitors will leave if they keep experiencing this, as no one enjoys being tested on without permission.

And if they notice the flash but don’t leave, it can affect the integrity of the test, as they’re now aware of what’s going on.

A/B testing tools like Convert Experiences, with its SmartInsert™ technology, not only eliminate flicker but also have little impact on page load speed.

This is how it works: Flicker-causing A/B testing tools apply the changes in the visitor’s browser while the webpage is still loading, using the A/B testing tool script installed on the site.

Meanwhile, the technology we developed is loaded as a layer between the browser and the website. This way, the changes are visible while the original content is hidden.

When the original content loads faster than the variant, you get a flicker. This means the wrong version hit the finish line first.

It takes only 13 milliseconds for the human brain to identify images, according to MIT neuroscientists. Flicker can last anywhere from 100 ms to a whole second.
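To put those numbers side by side, here is a rough sketch of the "race" described above. It treats flicker as the gap between the moment the original content is painted and the moment the variant is applied. The function names and timestamps are hypothetical, and using the 13 ms image-identification figure as a noticeability threshold is a simplifying assumption, not a measured rule.

```python
PERCEPTION_THRESHOLD_MS = 13.0  # figure quoted above; used here as an assumed cutoff


def flicker_duration_ms(original_painted_at_ms: float,
                        variant_applied_at_ms: float) -> float:
    """Time the original content stays visible before the variant replaces it."""
    return max(0.0, variant_applied_at_ms - original_painted_at_ms)


def is_noticeable(duration_ms: float) -> bool:
    """True if the flash lasts at least as long as the assumed threshold."""
    return duration_ms >= PERCEPTION_THRESHOLD_MS


# Hypothetical timings: the original paints at 300 ms, the variant lands at 450 ms.
slow_variant = flicker_duration_ms(300.0, 450.0)

# Hypothetical timings: the variant is ready before the original would paint.
fast_variant = flicker_duration_ms(300.0, 250.0)

print(slow_variant, is_noticeable(slow_variant))
print(fast_variant, is_noticeable(fast_variant))
```

In the first case the original wins the race by 150 ms, well above the threshold. In the second the variant is ready first, so the duration is zero and there is nothing for the visitor to notice.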

With Convert Experiences, the version you want always hits the finish line first. That means flicker-free testing, so you don’t lose traffic or hurt your test results.

Increased Operating Costs

Whether you’re a Fortune 500 company or a mom & pop shop, keeping operating costs down is a goal of every business. Be it reducing unproductive time, interruptions or labor costs, businesses want to keep overhead costs down.

This can be hard to accomplish when you spend hours weekly trapped on the phone trying to reach the right support agent to fix an issue with your testing tool. The time spent on calls to support reduces your overall productivity and costs your company money. Gallup reports that lost productivity costs companies $7 trillion globally every year.

Labor costs account for 70% of operating costs for businesses. Every time you pull a developer away from their responsibilities and tie them up with complicated testing tool set-ups, you are driving up operating costs. Think about overtime pay spent so devs can work on integrating your new CRO tool with your current Martech stack.

A testing tool that requires extensive dev resources right out of the box and comes with unresponsive support agents will increase your operating costs significantly.

What your strategy should be instead is to spend reasonably on tools and invest the rest of your budget in a strong optimization team. Your A/B testing tool shouldn’t cost $100,000 a year.

Tools are only that: tools. Without someone to use them, they might as well sit in a display case. Most optimization experts agree on this, and that’s why there’s an entire #TeamOverTools campaign in the LinkedIn CRO community.

Optimizers can produce revenue-driving results with a robust enough tool like Convert Experiences, which comes with 4X faster support to help your team do even better.

Instead of a 6-figure yearly subscription for A/B testing tools, invest your funds back in the team. Invest in the people who create your hypotheses, advance your culture of experimentation, and use the tools to extract insights that drive your business decisions.

The tool can only be as good as the person using it. Of course, tools are important but tools alone don’t run your optimization program. People do. You will be nowhere without people.

Privacy and Data Risks

The world is more privacy-conscious today. Laws and regulations have sprung up to defend the privacy of technology users.

The top 3 regulations influencing decisions today and causing major shifts in how the tech industry handles data and analytics are:

- General Data Protection Regulation (GDPR)

- Directive on Privacy and Electronic Communications, otherwise known as the ePrivacy Directive

- California Consumer Privacy Act (CCPA)

In A/B testing, for instance, the GDPR has clarified that everyone processing the personal data of people in the EU, from anywhere in the world, has to comply with the GDPR. You have to obtain consent before processing any of this data, state the purpose of the data processing, and make it easy to withdraw this consent.

In practical terms, this will

- affect the amount of data you can collect for A/B testing,

- shorten the cookie lifetime storage limit,

- limit the test population to those who don’t have “Do Not Track” activated on their browsers, and more.
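As a rough illustration of the last point, here is a minimal sketch of filtering a test population. It assumes a policy of excluding anyone who sends the browser's `DNT: 1` request header and of requiring an opt-in. The `eligible_for_test` helper, the `consent` flag, and the visitor records are all hypothetical; this is not any testing tool's actual API.

```python
from typing import Optional


def eligible_for_test(dnt_header: Optional[str], has_consent: bool) -> bool:
    """Return True only if the visitor may be bucketed into an experiment.

    dnt_header:  raw value of the "DNT" request header, or None if absent.
    has_consent: hypothetical flag set when the visitor opts in via a consent banner.
    """
    if dnt_header is not None and dnt_header.strip() == "1":
        return False  # visitor has "Do Not Track" switched on
    return has_consent


# Hypothetical visitors, for illustration only.
visitors = [
    {"id": "a", "dnt": None, "consent": True},   # no DNT header, opted in
    {"id": "b", "dnt": "1", "consent": True},    # DNT on, so excluded
    {"id": "c", "dnt": "0", "consent": False},   # no consent, so excluded
]

test_population = [
    v["id"] for v in visitors if eligible_for_test(v["dnt"], v["consent"])
]
print(test_population)
```

Only one of the three made-up visitors ends up in the test, which shows how quickly privacy rules can shrink the sample available to an experiment.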

Also, the ePrivacy Directive requires anonymizing visitor IDs, not storing or processing the personal data of end users, requesting consent for targeting geographical locations and time zones, etc.

Of course, this poses some major concerns, as the fines for defaulting feel like way more than a slap on the wrist.

The cost of a data breach varies from country to country. In the US, the average cost to a business that experiences a data breach is $8.19 million.

With the EU’s data protection law (GDPR) in place, the cost of a data breach is now much higher. Companies that process any data from people in the EU have to comply fully with GDPR or risk steep fines.

In 2019, France’s data protection agency fined Google $57 million for failing to meet GDPR transparency and consent requirements. Just recently, the AEPD, Spain’s data protection agency, fined a small cafe €1,500 for violating a section of GDPR.

Experimentation tools collect data about visitors seeing your variations.

Is this data anonymized? Does it fully comply with GDPR standards?

If your A/B testing tool collects and stores personally identifiable information about visitors (especially from the EU), it can open your business up to fines, which may range from thousands to millions of dollars.

A GDPR-compliant testing tool is the only way to be sure that your business faces no liability in collecting, storing and handling visitors’ data in the event of a data breach.

Right now, you don’t have to get consent before A/B testing. But don’t cross your fingers and pray that it stays that way. If you’re sensing it’s time to stay on the safe side of privacy laws, make the shift to Convert.

Convert Experiences is the leader in experimentation privacy. So, you can count on our tool to comply with all regulations and adapt to the changes in privacy regulations. As we walk into a cookie-less future, get a testing tool that’ll grow with your team and keep your optimization program in tip-top condition.

Conclusion

The wrong A/B testing tool doesn’t just cost your company money; it can also cost you time and open your company up to liabilities. If you find yourself in that situation, you should switch to a better tool. What you need is a tool that you can tailor to your needs, with minimal impact on page speed and support response times 4 times faster than the industry average.

That tool has a name: Convert Experiences. Try it for 15 days for free. No credit card required.

Written By

Nneka Otika, Uwemedimo Usa

Edited By

Carmen Apostu