13 Best A/B Testing Tools for Developers Who Want Full Control of Their Experiments

This roundup covers the top A/B testing tools built for developers, from open-source frameworks you can self-host to enterprise platforms with mature APIs.

Each one is reviewed with a focus on SDK coverage, integration depth, pricing, and real fit for engineering teams.

What Developers Look for in an A/B Testing Tool

When we spoke to developers about their A/B testing needs, here’s what consistently came up:

- SDK and API coverage: Real, well-documented SDKs for Node, Python, Java, Swift/Kotlin, React, Vue, and more. Not just “paste this snippet.”

- Experiment-as-code: Configs you can version in Git, push through CI/CD, and review like the rest of your code.

- Performance-first delivery: Lean scripts, edge/CDN assembly, and zero flicker. No trade-offs that wreck Core Web Vitals.

- Debuggability: Logs, QA tokens, and preview modes that make it obvious why a variation didn’t fire.

- SPA and server-side support: Experiments that work across React, Vue, Next.js, and backend logic, not just static pages.

- Data alignment: Clean integrations with GA4, Mixpanel, warehouses, and session replay. Engineers don’t want two dashboards telling different stories.

- Privacy and compliance: First-party identity, consent-aware APIs, and self-hosting options where needed.

- Docs and support: Task-focused docs with runnable examples; support that answers dev questions, not just marketer FAQs.

The deal-breakers are just as important. Developers will push back instantly against heavy, blocking scripts that hurt performance, black-box targeting logic that can’t be debugged, or tools that leave them explaining data mismatches to stakeholders. And if configs are locked away in a dashboard with no path through CI/CD, most engineers won’t even entertain the tool.
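To make the experiment-as-code point concrete, here is a minimal Python sketch of a check that a CI job could run against an experiment definition stored in Git. The config shape (`key`, `variations`, `weight`) and the `validate_experiment` helper are hypothetical and not tied to any vendor’s schema.

```python
from typing import Any

# Hypothetical experiment definition, as it might live in a Git-tracked file.
EXPERIMENT: dict[str, Any] = {
    "key": "checkout-button-color",
    "variations": [
        {"key": "control", "weight": 50},
        {"key": "green", "weight": 50},
    ],
}


def validate_experiment(config: dict[str, Any]) -> list[str]:
    """Return a list of problems; an empty list means the config passes."""
    problems: list[str] = []
    if not config.get("key"):
        problems.append("experiment is missing a key")
    variations = config.get("variations", [])
    if len(variations) < 2:
        problems.append("an experiment needs at least two variations")
    keys = [v.get("key") for v in variations]
    if len(set(keys)) != len(keys):
        problems.append("variation keys must be unique")
    total = sum(v.get("weight", 0) for v in variations)
    if total != 100:
        problems.append(f"variation weights sum to {total}, expected 100")
    return problems


# A CI step could fail the build whenever this list is non-empty.
issues = validate_experiment(EXPERIMENT)
print(issues or "config OK")
```

Because the definition is plain data, a change to traffic weights shows up in a pull request diff and can be reviewed like any other code change.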

A/B Testing Platforms for Developers: Complete Comparison Table

| Tool | Best For | SDK / API Support | Experiment-as-Code | Export Events / Metrics | Pricing |
|---|---|---|---|---|---|
| Convert Experiences | Privacy-first, enterprise-grade experimentation with strong APIs | ✅ | ✅ | ✅ | $299/mo |
| Optimizely Full Stack | Enterprise teams running large-scale, server-side tests | ✅ | ✅ | ✅ | Custom/contact sales |
| LaunchDarkly | Advanced feature flagging and controlled rollouts | ✅ | ✅ | ✅ | Tiered / contact sales |
| GrowthBook | Open-source experimentation platform with flexible hosting | ✅ | ✅ | ✅ | Free / $40/user |
| Statsig | Engineering-led teams needing scalable, warehouse-integrated testing | ✅ | ✅ | ✅ | Free tier + usage-based |
| VWO FullStack | Teams who want both marketer-friendly UI and developer APIs | ✅ | ✅ | ✅ | Module/custom pricing |
| ABsmartly | Broad SDK coverage and high-performance server-side testing | ✅ | ✅ | ✅ | Custom/contact sales |
| Adobe Target | Enterprises standardizing on Adobe’s marketing stack | ✅ | ✅ | ✅ | Enterprise pricing |
| Amplitude Experiment | Product teams tying analytics directly into experimentation | ✅ | ✅ | ✅ | Free / $49/mo |
| PostHog | All-in-one open-source experimentation and product analytics tool | ✅ | ✅ | ✅ | Free up to 1M events, then usage-based |
| Kameleoon | Privacy- and compliance-sensitive product teams | ✅ | ✅ | ✅ | Free / $495/mo |
| Eppo | Data teams running warehouse-native experiments | ✅ | ✅ | ✅ | Custom/contact sales |
| Firebase A/B Testing | Mobile app developers using Remote Config | ✅ | ✅ | ✅ | Included in Firebase / free tier |

How We Chose Each Tool

Experimentation is no longer just conversion rate optimization. It is the decision-making DNA of organizations, and tests are increasingly run by product teams that collaborate closely with developers and engineers.

At Convert, we speak with over a hundred professionals every year to bring fresh research and insights to the experimentation and analytics space. To shortlist the tools for this round-up, we spoke to experimentation leads in growth & product. We ran their requirements by developers and took stock of the gap between what the strategy makers recommend and what the executors (developers) need.

We found that devs care about the ability to inject code, data quality and segregation, SPA handling, lean snippets, zero flicker, stable end-to-end visitor tracking, and powerful API and MCP server access.

The Best A/B Testing Software for Developers

- Convert Experiences: Best for privacy-first, enterprise-grade experimentation with strong APIs

- Optimizely Full Stack: Best for enterprise teams running large-scale, server-side tests

- LaunchDarkly: Best for advanced feature flagging and controlled rollouts

- GrowthBook: Best open-source experimentation platform with flexible hosting options

- Statsig: Best for engineering-led teams needing scalable, warehouse-integrated testing

- VWO FullStack: Best for teams who want both marketer-friendly UI and developer APIs

- ABsmartly: Best for broad SDK coverage and high-performance server-side testing

- Adobe Target: Best for enterprises standardizing on Adobe’s marketing stack

- Amplitude Experiment: Best for product teams tying analytics directly into experimentation

- PostHog: Best all-in-one open-source experimentation and product analytics tool

- Kameleoon: Best for privacy- and compliance-sensitive product teams

- Eppo: Best for data teams running warehouse-native experiments

- Firebase A/B Testing: Best for mobile app developers using Remote Config

Do you want to explore more A/B testing tools? Check out our curated Top A/B Testing Tools for Actionable Marketing Insights.

1. Convert

Who this tool is for: Lean, medium-sized product and dev teams looking for full-stack experimentation and flexible feature flagging capabilities with strong APIs and SDKs.

Pricing: Paid plans start at $299/month (billed yearly) for up to 100K tested users, with all features included. Convert offers a 15-day free trial (no credit card required).

What is Convert?

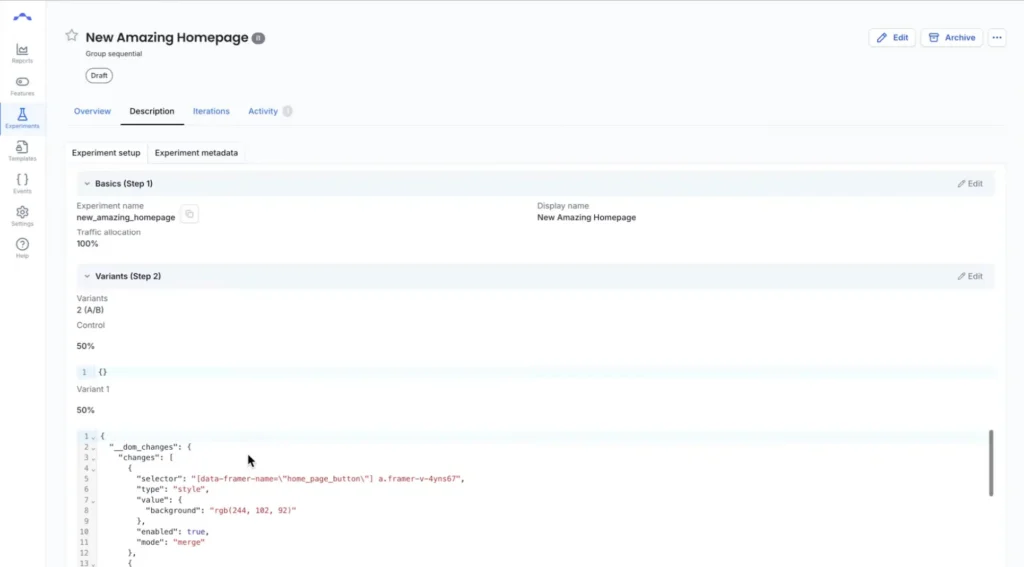

Convert is a full-stack experimentation platform that gives developers and product teams full control over how experiments are delivered, tracked, and scaled across modern stacks. It supports client-side and server-side testing, multivariate experiments, and single-page applications (SPAs).

What are Convert’s Top Features?

- Full-stack SDKs and edge delivery: Open-source JavaScript SDK plus backend support for server-side and edge experiments with deterministic bucketing, zero flicker, and ad-blocker resistance.

- REST APIs and experiment-as-code workflows: Automate experiment creation, goal tracking, and reporting via APIs. Manage experiments in Git, align with staging/production environments, and integrate into CI/CD pipelines.

- SPA support: Run experiments on modern frontends with built-in support for React, Vue, and dynamic apps, including route-change detection, continuous activation, and manual refresh control for custom logic.

- Feature flags and controlled rollouts: Gradual rollouts, kill switches, and percentage gating to release features safely.

- Advanced targeting and dynamic conditions: Combine behavioral, geo, device, and custom data with JavaScript conditions that resolve asynchronously.

- Integrations and data control: Native integrations with GA4, Segment, Mixpanel, Amplitude, and BigQuery, plus lifecycle events and client-side data access for syncing experiment data with internal systems.

- MCP support: Control experiments directly through Claude, Cursor, or any MCP-compatible AI assistant. Configure API credentials and use natural language to manage tests, pull reports, and analyze performance.
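As an illustration of combining targeting conditions, the sketch below composes small predicates over visitor attributes into a single audience rule. It is a generic, synchronous Python sketch: the attribute names (`device`, `country`, `page_views`) and helper functions are hypothetical, and it does not reproduce Convert’s own JavaScript condition syntax or its asynchronous resolution.

```python
from typing import Any, Callable

Visitor = dict[str, Any]
Condition = Callable[[Visitor], bool]


def attr_in(name: str, allowed: set[Any]) -> Condition:
    """Match when the visitor attribute is one of the allowed values."""
    return lambda visitor: visitor.get(name) in allowed


def attr_at_least(name: str, minimum: float) -> Condition:
    """Match when a numeric visitor attribute reaches the minimum."""
    return lambda visitor: visitor.get(name, 0) >= minimum


def all_of(*conditions: Condition) -> Condition:
    """Combine conditions with AND."""
    return lambda visitor: all(c(visitor) for c in conditions)


# Hypothetical audience: mobile visitors in DE or AT with at least three page views.
audience = all_of(
    attr_in("device", {"mobile"}),
    attr_in("country", {"DE", "AT"}),
    attr_at_least("page_views", 3),
)

print(audience({"device": "mobile", "country": "DE", "page_views": 5}))
```

Keeping each condition as a small function makes it straightforward to log which one failed, which is the kind of visibility developers ask for when a variation does not fire.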

Convert’s Pros and Cons

| Pros ✅ | Cons ❌ |
|---|---|
| Lightweight experimentation script and no flicker | Documentation for edge frameworks lags behind |
| Flexible APIs and SDK options | Some advanced integrations require manual configuration |
| Reliable targeting and segmentation logic | Deep technical work required for complex goal tracking setups |
| Responsive and capable support team for complex implementations | |

Why Convert is a Fit for Developers

Convert emphasizes flexibility and speed without adding integration headaches. Developers can code experiments directly, automate workflows via APIs, connect results into existing pipelines, and even manage everything conversationally through AI assistants using Convert’s MCP integration.

Debuggability is built in with Live Logs and QA tokens, making it easier to trace why a variation did or didn’t fire. Its infrastructure is optimized for reliability and performance, ensuring experimentation runs without impacting user experience or site metrics.

Why Do Companies Use Convert?

Engineering-led teams, SaaS companies, ecommerce brands, and agencies choose Convert because it balances developer control with scalability. Developers highlight the SDK and API coverage, CI/CD compatibility, and strong support for privacy regulations.

Teams also value Convert’s predictable, flat pricing and the ability to run enterprise-grade experimentation without unnecessary overhead.

If you need more control over how experiments are implemented, explore Convert’s developer-first testing platform.

What Users Say About Convert

Positive:

Based on my experience, they really make sure or do their best that they can be available most of the time. They also respond quickly and do their best to provide a solution/answer to your problem/inquiry.

One of the things I like about Convert is their constant goal to improve the platform, from features to fixes. From small to big changes.

Source: G2

Critical:

The only thing I notice for now is their documentation (although its updated now) still there are some topics that weren’t covered, like implementing Convert with Hydrogen-based apps for that matter.

Source: G2

2. Optimizely

Who this tool is for: Enterprise engineering and product teams running large-scale experimentation across web, mobile, and backend systems.

Pricing: Minimum entry point is reported to be $36K/year. You have to contact sales for your custom pricing and demo.

What is Optimizely?

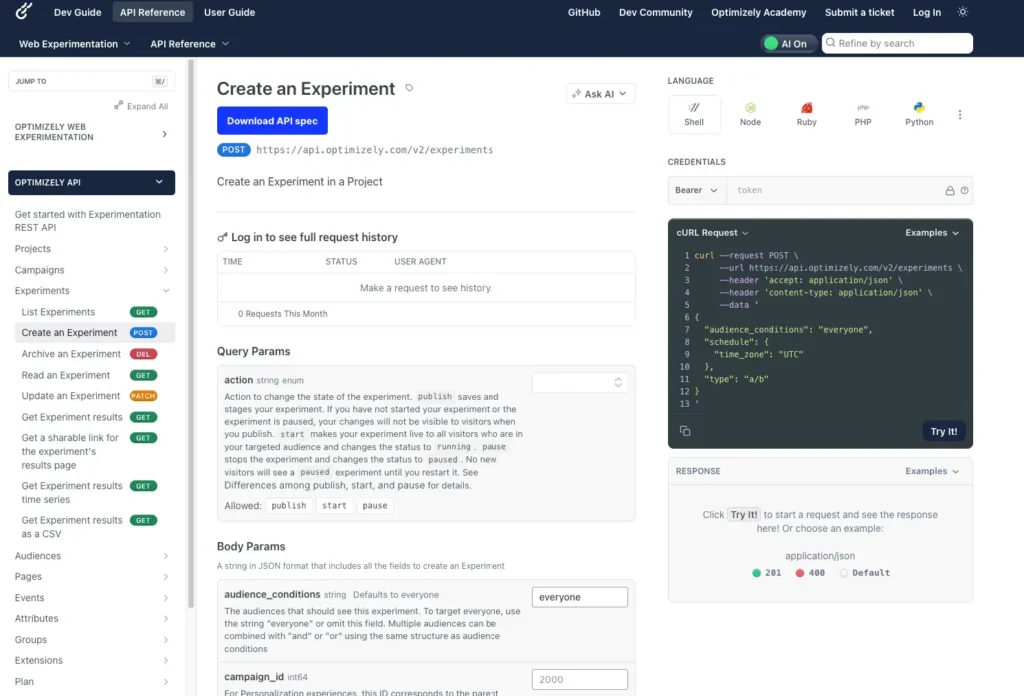

Optimizely combines client-side Web Experimentation and server-side Feature Experimentation into one platform. For developers, it provides SDKs across languages, REST APIs, a microservice “Optimizely Agent” option, and deterministic bucketing logic to ensure consistent variation delivery across systems.

What are Optimizely’s Top Features?

- Full-stack SDKs & feature flags: SDKs for Java, Python, Go, Node, iOS, Android, etc., with the ability to run experiments in backend services or client code.

- Microservice Agent API: The Optimizely Agent acts as a standalone service you can deploy behind a load balancer to centralize bucketing and decision logic across services.

- REST APIs for management: Feature Experimentation APIs allow managing flags, experiments, reports, and environments programmatically.

- Deterministic bucketing: Uses MurmurHash3 hashing with the user ID and experiment key to ensure consistent variation across SDKs.

- Performance and latency control: In-memory bucketing, agent-based decisioning, edge deployments, and flicker control for client-side experiments.

- GA4 integration for backend and frontend metrics: Supports integration with Google Analytics 4 via Report Generation, custom events, and audience sync.
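The deterministic bucketing idea can be sketched in a few lines of Python. This is not Optimizely’s implementation: SHA-256 from the standard library stands in for MurmurHash3, and the key format, the 10,000-slot range, and the `bucket` function are assumptions made for illustration, so the output will not match assignments made by any real SDK.

```python
import hashlib
from typing import Optional


def bucket(
    user_id: str,
    experiment_key: str,
    variations: list[tuple[str, int]],
) -> Optional[str]:
    """Map a user to a variation.

    `variations` holds (variation key, share out of 10_000) pairs.
    """
    # Hash experiment key + user ID so the same inputs always give the same slot.
    # SHA-256 is a stand-in here; the article mentions MurmurHash3.
    digest = hashlib.sha256(f"{experiment_key}:{user_id}".encode("utf-8")).digest()
    slot = int.from_bytes(digest[:4], "big") % 10_000

    # Walk the cumulative traffic ranges until the slot falls inside one.
    upper = 0
    for key, share in variations:
        upper += share
        if slot < upper:
            return key
    return None  # user falls outside the allocated traffic


split = [("control", 5_000), ("treatment", 5_000)]
print(bucket("user-123", "checkout-test", split))
```

Because the result depends only on the inputs, two services that share the same experiment definition reach the same decision for a user without calling each other.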

Optimizely’s Pros and Cons

| Pros ✅ | Cons ❌ |
|---|---|
| Mature experimentation infrastructure with robust SDK support | Pricing is on the high end |
| Governance features for large engineering teams | Implementation can require significant engineering resources |
| Advanced stats engine and experiment guardrails | Contract-based pricing limits flexibility for startups |
| Reliable deterministic bucketing | |

Why Optimizely is a Fit for Developers

With Optimizely, engineers can manage experiments as code, embed them into CI/CD pipelines, and rely on SDKs rather than manual script injections.

The Agent microservice lets teams centralize decision logic across services without duplicating SDK instances.

Debugging support (forced decisions, QA audiences, logs) helps trace variation behavior. Its GA4 integration means experiment results can feed directly into analytics pipelines, reducing the friction of cross-platform reporting.

Why Do Companies Use Optimizely?

Larger engineering-driven organizations and enterprises choose Optimizely for its scale, stability, and depth of integration.

Users value the ability to run complex multi-stack experiments, integrate deeply into their data infrastructure, and hand off ownership to developers rather than intermediaries.

Cost, setup complexity, and contract rigidity are frequent drawbacks cited by users, but many teams see the tradeoff as worth it for reliability and control.

What Users Say About Optimizely

Positive:

I am using Optimizely to control which roles on my SaaS can see each feature. It’s very easy to control experimentation and feature flag. Its very simple to implement and to make changes to audiences and features. We use it every day in production, and it’s very easy to integrate with the JavaScript library.

Everything always worked fine, so we never need to call the customer support, so no feedbacks about that.

Source: G2

Critical:

While Optimizely is a great tool, it lacks some essential management features. I’d love to see better tools for flag management, such as an overview of all running, paused, and stale flags, the ability to label and tag flags, custom fields for adding extra details, and insights into flags running for too long.

Source: G2

3. LaunchDarkly

Who this tool is for: Engineering-led SaaS and product teams using feature flags, progressive rollouts, and production experiments to ship features safely.

Pricing: Starts free for feature flagging and experimentation. The pro plans start from $12 per service connection per month, with a 14-day free trial.

What is LaunchDarkly?

LaunchDarkly is a developer-centric feature management platform that lets you embed feature toggles and experiments into your application logic. It supports SDKs across client, server, edge, and mobile environments.

What are LaunchDarkly’s Top Features?

- Full-stack SDKs and feature flags: SDKs across many languages (Java, Python, Go, Node.js, .NET, iOS, Android, etc.) supporting evaluation of flags in both backend and frontend contexts.

- Management API: Programmatically manage flags, environments, contexts, segments, and fetch data via LaunchDarkly’s API.

- Deterministic evaluation and context hashing: SDKs evaluate flags deterministically based on context attributes or keys, ensuring consistent variation assignment.

- Performance and delivery controls: SDKs stream updates, provide local caching, use Relay Proxy to reduce client overhead, and support offline or fallback modes.

- Governance and safety: Flag versioning, code reference detection, audit logs, approval workflows, rollback, and guardrails.
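Local caching with a fallback value can be pictured as a small in-memory store that accepts versioned updates and returns the caller’s default when a flag is unknown. The `FlagStore` class below is a hypothetical Python sketch of that pattern, not LaunchDarkly’s SDK or its API.

```python
from typing import Any


class FlagStore:
    """In-memory cache of flag values, as an SDK might keep between updates."""

    def __init__(self) -> None:
        self._flags: dict[str, tuple[int, Any]] = {}  # key -> (version, value)

    def apply_update(self, key: str, version: int, value: Any) -> bool:
        """Store an update unless the same or a newer version is already cached."""
        current = self._flags.get(key)
        if current is not None and current[0] >= version:
            return False  # stale or duplicate message, ignore it
        self._flags[key] = (version, value)
        return True

    def variation(self, key: str, default: Any) -> Any:
        """Return the cached value, or the default when the flag is unknown."""
        cached = self._flags.get(key)
        return default if cached is None else cached[1]


store = FlagStore()
store.apply_update("new-checkout", version=2, value=True)
store.apply_update("new-checkout", version=1, value=False)  # arrives late, ignored
print(store.variation("new-checkout", default=False))
print(store.variation("unknown-flag", default=False))
```

Evaluating against the cache keeps flag checks off the network path, and the explicit default means the application still behaves predictably if no update has arrived yet.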

LaunchDarkly’s Pros and Cons

| Pros ✅ | Cons ❌ |
|---|---|
| Extensive SDK coverage | Experimentation features aren’t the focus in LaunchDarkly |
| Gradual rollouts and instant kill switches | Experiment analysis may require external analytics tools |
| Strong governance features such as audit logs and approval workflows | Some teams report difficulty tracking stale flags |
| Strong reliability in production environments | |

Why LaunchDarkly is a Fit for Developers

Developers gravitate to LaunchDarkly because it offers a robust, mature infrastructure for feature control and experimentation without imposing a separate experimentation tool.

You can embed flags in your code, roll them out gradually, and instrument decisions, all while minimizing latency and overhead.

Why Do Companies Use LaunchDarkly?

Engineering-led organizations adopt LaunchDarkly when feature control, safety, and performance are non-negotiable. It’s often chosen by teams building software that must evolve dynamically, like in SaaS, consumer applications, and large distributed systems, where toggling features and experimenting live is essential.

What Users Say About LaunchDarkly

Positive:

I like how easy it is to navigate the UI, and that it lets me group flags, rollouts, and other categorizable resources however I want. It can be as simple or as complex as I need, depending on what I’m trying to do.

The change request approval system has been the biggest good change that I’ve used. It saves a lot of time that would otherwise be spent on private DMs on Slack.

Source: G2

Critical:

While LaunchDarkly offers robust capabilities as a feature management platform, it can become challenging to handle as the number of flags and environments increases. Teams that are new to feature flagging may face a learning curve, and keeping flags well-organized demands consistent effort and discipline.

Source: G2

4. GrowthBook

Who this tool is for: Data-driven product teams that want open-source experimentation and feature flags integrated with their warehouse and analytics stack.

Pricing: Starts free and remains free, unless you go for the pro plan, which starts at $40/user/month.

What is GrowthBook?

GrowthBook is an open-source experimentation and feature-flag platform built for engineering and data-savvy teams. It integrates directly with your data stack (e.g., BigQuery, Postgres, etc.), letting experiments live alongside your metrics.

What are GrowthBook’s Top Features?

- Full-stack SDKs and feature flags: Official SDKs are offered for many environments, such as Node.js, Java, Python, C#, Go, PHP, Ruby, Elixir, browser JS/TypeScript, React, Vue, mobile (Kotlin, Swift, Flutter), and edge (Cloudflare Workers, Lambda@Edge).

- Management API: GrowthBook exposes a full REST API for creating, updating, and fetching experiments, features, metrics, and environments programmatically.

- Deterministic bucketing and local evaluation: Variation assignment occurs locally in the SDK (or edge) based on user attributes and the experiment definition, eliminating reliance on real-time server calls for each decision.

- Performance: SDKs are built to be lightweight and efficient. The JS SDK supports streaming updates (SSE), so feature definitions can be updated in real time without polling.

- Analytics and metric agnosticism: Instead of forcing its own analytics engine, GrowthBook runs experiment results atop your data. That means experiment metrics, KPIs, or events you already track can be used directly in analysis.
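To show what analysis on top of your own data can look like, the sketch below joins an exposure table with an existing event table and computes a conversion rate per variation. An in-memory SQLite database stands in for a warehouse, and the table names, columns, and rows are hypothetical; this is not the SQL that GrowthBook generates.

```python
import sqlite3

# In-memory SQLite stands in for a warehouse such as BigQuery or Postgres.
conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE exposures (user_id TEXT, variation TEXT);
    CREATE TABLE purchases (user_id TEXT);
    INSERT INTO exposures VALUES
        ('u1', 'control'), ('u2', 'control'),
        ('u3', 'treatment'), ('u4', 'treatment');
    INSERT INTO purchases VALUES ('u2'), ('u3'), ('u4');
    """
)

# Count exposed users and converting users per variation.
QUERY = """
    SELECT e.variation,
           COUNT(DISTINCT e.user_id) AS users,
           COUNT(DISTINCT p.user_id) AS converters
    FROM exposures e
    LEFT JOIN purchases p ON p.user_id = e.user_id
    GROUP BY e.variation
    ORDER BY e.variation
"""

results = {
    variation: converters / users
    for variation, users, converters in conn.execute(QUERY)
}
print(results)
```

The purchase events here are whatever the team already tracks, so the experiment reuses existing metric definitions instead of duplicating them in a second tool.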

GrowthBook’s Pros and Cons

| Pros ✅ | Cons ❌ |
|---|---|
| Open-source platform with full transparency and control | Requires engineering effort to configure and maintain |
| Warehouse-native analysis that works with your existing analytics pipelines | UI is not as polished as enterprise alternatives |
| Flexible deployment with self-hosting options | Smaller ecosystem compared to other testing tools |
| Supports feature flags as well | |

Why GrowthBook is a Fit for Developers

GrowthBook aligns with how engineers want to work, i.e., experiments are code-driven, data lives in your own infrastructure, and there’s no black box.

You can version experiment setups, pull metrics from your warehouse, automate rollbacks or guardrails via API, and maintain consistency across backend and frontend contexts.

Because GrowthBook decouples evaluation from analysis, you’re not constrained by vendor dashboards; you plug it into your existing pipelines seamlessly.

Why Do Companies Use GrowthBook?

Teams choose GrowthBook for transparency, flexibility, and control. Because it’s open source, there’s no vendor lock-in.

It’s ideal for orgs already maintaining data warehouses that want to run experiments alongside their core analytics. Its breadth of SDKs and deployment options makes it a good fit for teams whose code spans frontend, backend, and edge.

What Users Say About GrowthBook

Positive:

It’s pretty straight forward if you know your way around other A/B testing tools. Does a really good job at that. Works well in our frontend as well as backend.

Their support is outstanding, we had an issue with our store and I was suspecting growthbook as one of the causes. Quick turnaround and hinting us the right direction did help us fix the real issue of a synchronous script from a third party not loading in time, something I had missed in the network tab myself.

Source: G2

Critical:

Documentation sometimes can be lacking, and very little is described about the `multi-org` setup which is present in the application.

Source: G2

5. Statsig

Who this tool is for: Startup and scaleup product teams running developer-first experimentation with feature flags, analytics, and warehouse-native metrics.

Pricing: Starts free. Pro plans are available from $150/month.

What is Statsig?

Statsig is a platform built for developers to manage feature flags, experiments, and metrics in code. It provides SDKs, APIs, and analytics tooling so that experimentation becomes part of your dev workflow, not an afterthought.

What are Statsig’s Top Features?

- SDKs & feature gates across environments: Client and server SDKs support many languages and runtime environments, enabling you to run experiments and feature logic natively.

- Local evaluation & low-latency flag checks: The SDKs use caching, polling, and efficient logic so decisions occur quickly without blocking your application.

- Event API and metrics ingestion: You can log custom events, exposures, and metric inputs directly from your code, which feed into your experiments’ metrics.

- Warehouse-native experiment computation: Use Warehouse Native to run analysis on your data warehouse (BigQuery, Snowflake, etc.), combining your own data pipelines with Statsig’s measurement logic.

- Edge and infrastructure support: Integrations and low-overhead evaluation logic make Statsig viable even at the edge or in microservices without heavy latency impact.

Statsig’s Pros and Cons

| Pros ✅ | Cons ❌ |
|---|---|
| Developer-first platform for feature flags and testing | Analytics dashboards are less customizable than dedicated BI tools |
| Strong SDK coverage | Some users report that collaboration features need more work |
| Free tier makes it available to startup teams | |

Why Statsig is a Fit for Developers

Developers gravitate to Statsig because it respects the craft: experiments live in code, metrics flow through your pipelines, and performance is first class.

You can version your experiments, integrate them with CI/CD, and analyze using your own data stack, rather than relying on a tool that imposes its own boundaries.

Why Do Companies Use Statsig?

Engineering-led teams adopt Statsig when they want to collapse the barrier between product logic and experimentation. It’s suited for architecture-heavy environments (microservices, edge, serverless) and teams that prefer to build experimentation in the same workflows as features.

What Users Say About Statsig

Positive:

With Statsig, the set-up of the system to your platform-of-interest is incredibly easy through the use of their “Integrate Statsig SDK” with a wide range of options such as JavaScript and HTML.

There are a wide range of configurable features to ensure that the analytics collected provide maximum benefit and insight to the customer. One key use case is understanding how to improve the performance of the product and identify pain points for efficiency enhancements.

Source: G2

Critical:

One area that could be improved in Statsig is the workflow for sharing experiments or feature gates in development with colleagues. Although using overrides is an option, it can be somewhat cumbersome.

Source: G2

6. VWO

Who this tool is for: Mid-market-to-enterprise SaaS, ecommerce, and digital businesses where developers run backend experiments while growth teams manage frontend testing.

Pricing: Custom pricing. You have to contact sales for a demo and pricing. A 30-day free trial is also available.

What is VWO?

VWO Feature Experimentation is the engineering side of VWO, supporting SDK-driven feature flags, experiments, and personalization logic in production code. It evolved from VWO’s full-stack product into a unified platform for both frontend and backend logic.

What are VWO’s Top Features?

- Full-stack SDKs and feature flags: Server-side and client-side SDKs in .NET, Go, Java, Node.js, PHP, Python, Ruby, Android, iOS, React Native, JavaScript, Flutter.

- REST APIs for management: VWO’s APIs let you manage campaigns, experiments, variants, and metrics programmatically.

- Deterministic variation logic: SDKs use deterministic bucketing so the same user context leads to consistent variation assignment across environments.

- Low-latency design: Asynchronous event delivery, event batching, and efficient evaluation logic to minimize runtime impact.

- Analytics integration support: VWO supports pushing experiment data (variation, impression, metrics) into analytics layers and integrating with GA4 via its integrations and data export paths.
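Event batching, mentioned in the list above, is simple to picture: events accumulate in a buffer and leave in groups rather than one request each. The `EventBatcher` class below is a hypothetical Python sketch, not VWO’s SDK. It flushes synchronously for brevity, whereas a real SDK would more likely flush from a background thread or timer.

```python
from typing import Any, Callable

Event = dict[str, Any]


class EventBatcher:
    """Buffer tracking events and hand them to `send` in batches."""

    def __init__(self, send: Callable[[list[Event]], None], batch_size: int = 3) -> None:
        self._send = send
        self._batch_size = batch_size
        self._buffer: list[Event] = []

    def track(self, event: Event) -> None:
        """Queue an event and flush once the batch is full."""
        self._buffer.append(event)
        if len(self._buffer) >= self._batch_size:
            self.flush()

    def flush(self) -> None:
        """Send whatever is buffered; does nothing when the buffer is empty."""
        if not self._buffer:
            return
        batch, self._buffer = self._buffer, []
        self._send(batch)


# `print` stands in for an HTTP call to a collection endpoint.
batcher = EventBatcher(send=print, batch_size=2)
batcher.track({"event": "impression", "variation": "B"})
batcher.track({"event": "purchase", "value": 42})  # second event triggers a flush
batcher.flush()  # nothing left to send
```

Calling `flush()` on shutdown matters in practice, since otherwise the last partial batch would be lost.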

VWO’s Pros and Cons

| Pros ✅ | Cons ❌ |
|---|---|
| Combines developer experimentation with visual testing capabilities | Pricing is not transparent |
| SDKs support frontend and backend | Script is occasionally reported to be heavy on pages |
| Comes with behavioral insight tools (heatmaps and session replays) | Reporting customization options are limited |

Why VWO is a Fit for Developers

VWO gives devs control. You can embed experiments in backend logic or front-end code using SDKs, trigger events programmatically, and route data via callbacks into analytics systems like GA4.

The REST API lets you manage configurations as code, and the SDKs support environments where you need minimal latency or precise feature gating. Because VWO houses both front-end experiments and backend flagging, you don’t need multiple systems.

Why Do Companies Use VWO?

Teams adopt VWO when they want a single system that supports their entire experimentation lifecycle—from visual tests to backend feature rollout—with developer-friendly integrations.

What Users Say About VWO

Positive:

The ease of use of the tools. Implementation was seamless. The tools are simple to use for the business end user with the ability to easily create and preview experiences. Reporting is also robust and allows you to drill down into the data to develop insights. The quality of support is unmatched. It’s been an absolutely positive experience working with the VWO team.

Source: G2

Critical:

In some cases, the testing script can slightly delay page rendering.

Source: G2

7. ABsmartly

Who this tool is for: Enterprise engineering teams running coded experiments across backend services, mobile apps, and high-traffic platforms.

Pricing: Event-based pricing starting at €60K/year (~$70K). You have to contact sales for your custom pricing and a demo.

What is ABsmartly?

ABsmartly is an experimentation platform designed specifically for developers. It emphasizes coded experiments, SDKs, and deep API access rather than visual editors, supporting full-stack testing (web, mobile, backend) using feature flags and A/B experiments.

What are ABsmartly’s Top Features?

- Developer-first, API and SDK approach: All experiments are defined via code or APIs; there is no heavy reliance on a visual builder.

- Group Sequential Testing (GST): An adaptive statistical model enabling earlier decision-making without sacrificing power.

- Full-stack support (client and server): Able to run feature flags or experiments in backend services, web front ends, or mobile.

- Unlimited experimentation and segmentation: No fixed caps on the number of experiments, goals, segments, or users in many configurations.

- Low-latency or zero-flicker design: Claims of “zero-lag execution” and flicker avoidance for experiments.

- Raw data access and export: Full access to experiment data, integrations, and the ability to push data to BI or warehouses.
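Group sequential designs allow early stopping by rationing the allowed false-positive rate across interim looks. The source does not say which boundaries ABsmartly uses, so the Python sketch below picks a textbook choice for illustration only: the Lan-DeMets spending function of O’Brien-Fleming type, which gives the cumulative two-sided alpha that may be spent once a given fraction of the planned sample has been collected. The function name and the four equally spaced looks are assumptions.

```python
from statistics import NormalDist


def obrien_fleming_alpha_spent(information_fraction: float, alpha: float = 0.05) -> float:
    """Cumulative two-sided alpha spent at a given fraction of the planned sample."""
    if not 0 < information_fraction <= 1:
        raise ValueError("information_fraction must be in (0, 1]")
    normal = NormalDist()
    z = normal.inv_cdf(1 - alpha / 2)
    # Spending function: alpha(t) = 2 * (1 - Phi(z / sqrt(t)))
    return 2 * (1 - normal.cdf(z / information_fraction ** 0.5))


# Four equally spaced looks at the data (an assumption for this example).
for look in (0.25, 0.5, 0.75, 1.0):
    spent = obrien_fleming_alpha_spent(look)
    print(f"{look:.0%} of planned sample: cumulative alpha spent = {spent:.5f}")
```

Very little alpha is spent at early looks, so only large effects stop a test early, and the full budget is reached at the final look. Turning these cumulative values into per-look decision boundaries takes an additional numerical step that this sketch leaves out.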

ABsmartly’s Pros and Cons

| Pros ✅ | Cons ❌ |
|---|---|
| Fully developer-centric platform where experiments are defined in code | Documentation can be technical and dense |
| Advanced statistical models like Group Sequential Testing | Marketing teams will find it difficult to use this tool alongside engineering |
| No practical limits on experiments or segmentation | Enterprise pricing puts it out of reach for many teams |

Why ABsmartly is a Fit for Developers

ABsmartly aligns with engineering workflows: everything is codified, versionable, and surfaced through APIs. Its GST model means tests can finish faster, reducing the cost of running experiments in heavy traffic environments. Its architecture also aims to avoid performance drag, flicker, or side effects.

Why Do Companies Use ABsmartly?

Teams using ABsmartly often value control, data ownership, and performance safety. It appeals to organizations that prefer to keep experimentation within their codebase rather than treating it as an external tool.

Because it avoids limits on experiment count or user volume in many setups, it’s suited for programs that scale heavily.

What Users Say About ABsmartly

Positive:

Our experience with ABSmartly has been extremely positive. The platform is robust and provides a comprehensive infrastructure for conducting experiments securely and efficiently, enabling our product and experimentation teams to scale testing initiatives without concerns about limitations.

Source: Gartner

Critical: No recent critical review found on G2.

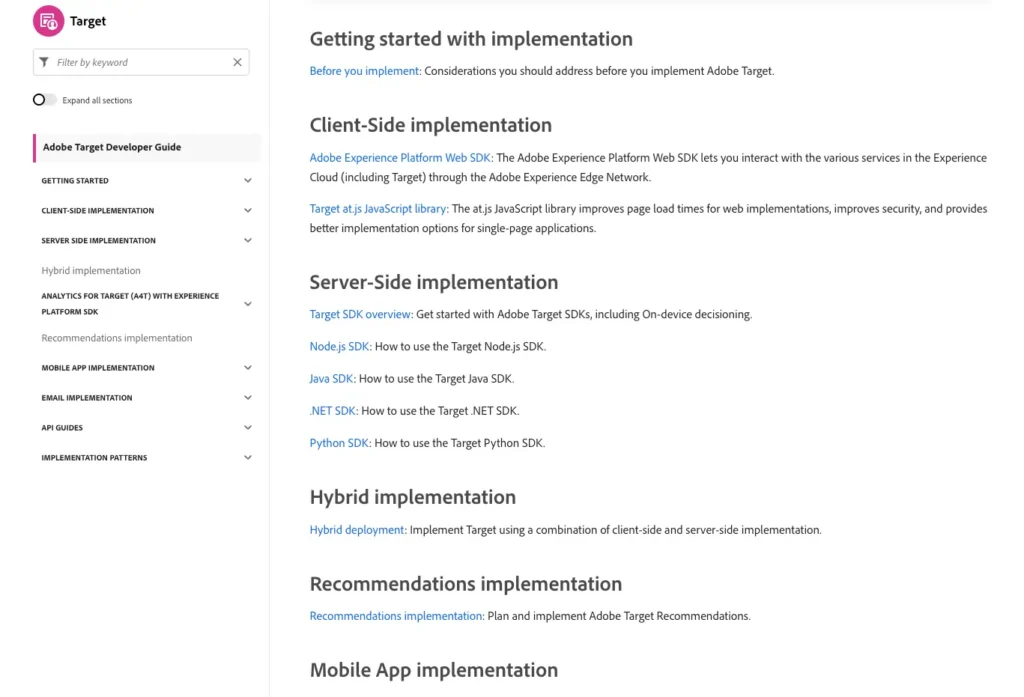

8. Adobe Target

Who this tool is for: Large enterprises already using Adobe Experience Cloud that need experimentation and personalization integrated with their marketing stack.

Pricing: Custom sales-led pricing.

What is Adobe Target?

Adobe Target is Adobe’s experimentation and personalization engine, exposed via APIs, SDKs, and integrations with the Adobe Experience Platform.

Developers use it to deliver personalized experiences at the edge, run experiments from backend services, and integrate variant decisions into application logic.

What are Adobe Target’s Top Features?

- Delivery API & SDKs for server-side: SDKs for Node.js, Java, .NET, and Python deliver experiences outside the client, with support for caching to reduce latency.

- Hybrid and client-server implementation via Web SDK, at.js, Edge flows: You can combine client-side personalization with server-side decisions via Adobe Experience Platform Web SDK or at.js.

- On-device decisioning and rule artifacts: Adobe Target can push rule definitions to the client side (after on-device decisioning is enabled) to reduce reliance on server-side calls.

- Admin, reporting, profile APIs: Use the Admin and Profile APIs to manage activities, audiences, offers, retrieve user profile data, or fetch reporting programmatically.

- Edge and personalization integration with Adobe Experience Platform: Seamless integration with Adobe’s Experience Cloud, identity services, and analytics.

Adobe Target’s Pros and Cons

| Pros ✅ | Cons ❌ |
|---|---|
| Powerful experimentation and personalization engine | Steep learning curve for most users |
| Highly scalable infrastructure for global brands | Expensive enterprise contracts |
| Supports complex multi-channel experiments | Performance overhead can occur with complex configurations |
| Deep integration with Adobe Experience Cloud | |

Why Adobe Target is a Fit for Developers

Developers get flexibility through SDKs, APIs, and hybrid logic. Experiments and personalization don’t need to live solely in the UI. You can embed decisions in your app server, combine them with other services, and honor performance constraints like cacheable responses and client-side rendering when necessary.

Why Do Companies Use Adobe Target?

Engineering organizations adopt Adobe Target when they already rely heavily on the Adobe Experience Cloud and need scalability, governance, and interoperability.

When experiments need to run across web, mobile, email, or devices, and integrate with identity and analytics infrastructure, Target is often the choice.

What Users Say About Adobe Target

Positive:

I really value how easy it is to set up AB testing, making it possible for me to launch experiments both quickly and efficiently. The initial setup process is straightforward, and as I continue to use the platform, I find it becoming an even more dependable tool. Customer support is always accessible and very helpful, providing effective solutions whenever I encounter any issues.

Source: G2

Critical:

While Adobe Target is powerful, it can be quite complex to set up and configure, especially for teams without dedicated technical resources. The learning curve is steep, and some features feel overcomplicated. Additionally, it can be slow to load, and the interface could be more intuitive. Integration with non-Adobe products sometimes requires additional steps, and the pricing can be high for smaller businesses.

Source: G2

9. Amplitude Experiment

Who this tool is for: Product-led growth teams using Amplitude analytics who want experimentation tightly connected to product metrics and behavioral data.

Pricing: Starts free; paid plans start at $49/month.

What is Amplitude Experiment?

Amplitude Experiment is a developer-friendly experimentation and flag framework that lives beside Amplitude’s analytics library. It lets engineering teams embed variant logic directly into their applications, evaluate flags server-side or client-side, and tie experiments to analytics without maintaining a separate data stack.

What are Amplitude’s Top Features?

- SDKs and local/remote evaluation modes: Amplitude provides SDKs (JavaScript, Node.js, Python, iOS, Android, JVM, etc.) that support both remote fetch and local evaluation for lower latency decisioning.

- Unified SDK integration: The Browser Unified SDK lets you integrate analytics, experiments, and other features together so your codebase stays simpler.

- Feature flags and experiment logic in code: You can treat flags as both feature toggles and experiment variables, with rollout control, kill switches, and safe fallbacks.

- Statistical rigor and experiment metrics built in: Experiment outcomes come with significance calculation, confidence intervals, and baked-in reporting logic.

- Consistent variant evaluation across platforms: The hashing and variant logic are consistent across SDKs, so a user sees the same variant on mobile, web, and backend.
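To make the last point concrete, here is a minimal sketch of deterministic bucketing, the general technique behind consistent cross-platform assignment. It is not Amplitude's actual algorithm: the function name `assign_variant`, the SHA-256 hash, and the `flag_key:user_id` salt format are all assumptions chosen for illustration.

```python
import hashlib


def assign_variant(user_id: str, flag_key: str,
                   variants: list[tuple[str, float]]) -> str:
    """Deterministically map (flag_key, user_id) to a weighted variant.

    `variants` is a list of (name, weight) pairs whose weights sum to 1.0.
    Hypothetical helper for illustration, not a vendor API.
    """
    # Salt the hash with the flag key so each flag gets an independent split.
    digest = hashlib.sha256(f"{flag_key}:{user_id}".encode("utf-8")).digest()
    # Map the first 4 bytes to a number in [0, 1).
    bucket = int.from_bytes(digest[:4], "big") / 2**32

    cumulative = 0.0
    for name, weight in variants:
        cumulative += weight
        if bucket < cumulative:
            return name
    # Fallback in case floating-point rounding leaves a tiny gap below 1.0.
    return variants[-1][0]


SPLIT = [("control", 0.5), ("treatment", 0.5)]
first = assign_variant("user-42", "new-checkout", SPLIT)
second = assign_variant("user-42", "new-checkout", SPLIT)
assert first == second  # deterministic: no stored assignment needed
```

Because the result depends only on the inputs, any service that runs the same function with the same user ID and flag key lands on the same variant without sharing state.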

Amplitude’s Pros and Cons

| Pros ✅ | Cons ❌ |
|---|---|
| Tight integration with product analytics and behavioral metrics | Less mature experimentation capabilities compared with dedicated testing platforms |
| Built-in statistical guardrails | Some editing tools are still considered buggy by users |
| SDKs support experimentation across various environments | |
| Experiment analysis happens alongside product data | |

Why Amplitude is a Fit for Developers

For engineering teams, the appeal is to build experiments as part of product logic rather than rely on external tooling. You can version flag logic, integrate with CI/CD, and access variant decision results directly in code. The unified model with analytics simplifies instrumentation and reduces the risk of mismatches.

Why Do Companies Use Amplitude?

Tech-forward organizations use Amplitude Experiment when they want the experimentation layer tightly woven into their product stack, not bolted on. It suits environments where experiments, flags, and data pipelines all must interoperate, especially in microservice or multi-platform setups.

What Users Say About Amplitude

Positive:

The web experimentation is incredibly intuitive and easy to use for a product manager. It was super easy to setup, and the ability to run my own experiments on content and images without having to involve my development team makes this tool a big win for our ability to scale. We run tests every 2 weeks without issue, a pace we’ve never hit before with other tools.

Source: G2

Critical:

The editor is still a little buggy. HTML editing is difficult to use, even for a developer (on Plus plan).

Source: G2

10. PostHog

Who this tool is for: Startup and engineering-led product teams that want experimentation, analytics, and feature flags in a developer-controlled platform.

Pricing: Starts free. Beyond the free tier, you’ve got usage-based pricing.

What is PostHog?

PostHog is an open-core analytics and experimentation platform made for engineers. It combines tracking, feature flags, A/B testing, and session replay into a single system you can control, host, and extend.

What are PostHog’s Top Features?

- Autocapture and rich SDKs: Automatically logs clicks, page views, and form submissions with minimal setup.

- HogQL Query Language: SQL-flavored query engine to run custom queries directly on event data, enabling advanced analysis without leaving PostHog.

- Feature flags and experimentation: Built-in flags enable safe rollouts, controlled rollbacks, and A/B/n tests, keeping experiment logic in code rather than in external dashboards.

- Session recordings and replays: Developers can watch user sessions tied to events or flags to debug, track errors, and validate how variants actually perform in prod.

PostHog’s Pros and Cons

| Pros ✅ | Cons ❌ |
|---|---|
| Open-core platform combining analytics, feature flags, and experimentation | Setup and instrumentation require engineering effort |
| Full access to event data and schema for custom analysis | Performance issues may appear with very large datasets |
| Developer-friendly SDKs and APIs | Requires data engineering maturity for full value |
| Self-hosting option available | |

Why PostHog is a Fit for Developers

Developers get the full stack to host analytics, run experiments, inspect recorded sessions, and write custom integrations, with open access to raw data.

PostHog’s SDKs, APIs, and modular architecture let engineering teams integrate experimentation into CI/CD, custom pipelines, or microservices without being constrained by a tool’s limitations.

Why Do Companies Use PostHog?

Teams adopt PostHog when they prefer to own their analytics and experimentation infrastructure rather than outsource it. It is especially appealing to startups and engineering-led companies that want flexibility, reduced tool bloat, and the ability to scale on their own terms.

What Users Say About PostHog

Positive:

We use PostHog daily for both web and mobile analytics. It gives us full control over event tracking, funnels, and feature flags without relying on multiple tools. I especially like the flexibility of defining custom events, running product experiments, and connecting everything directly to our data stack. It feels very developer-friendly compared to tools like Mixpanel or Amplitude.

Source: G2

Critical:

There is a bit of a learning curve at the beginning, especially when exploring some of the more advanced features. However, once you get familiar with the platform, it becomes much easier to use.

Source: G2

11. Kameleoon

Who this tool is for: Enterprise SaaS, fintech, and ecommerce teams needing full-stack experimentation with strong privacy and compliance controls.

Pricing: Starts free, and then you’ve got the next tier at $495/month. There’s a 30-day free trial.

What is Kameleoon?

Kameleoon is a unified experimentation, feature management, and personalization platform that supports both client-side and server-side experimentation. It offers visual tools and SDKs for web, mobile, and backend environments.

What are Kameleoon’s Top Features?

- JavaScript API (front-end): The Activation API handles variation rendering with anti-flicker support, consent logic (enableLegalConsent, disableLegalConsent), SPA support (enableSinglePageSupport), and dynamic refresh logic for DOM changes.

- Automation API (REST): Programmatically create or manage experiments, segments, goals, and configurations.

- Data API (REST): Retrieve experiment or visitor data for external systems, reporting, or custom workflows.

- Server-side SDKs for feature experimentation: Kameleoon supports SDKs for languages including Java, C#, PHP, Node.js, Ruby, Python, Go, and more, enabling backend experiments and consistent feature flag behavior.

- Privacy and consent integration: The Activation API includes built-in methods to respect legal consent, enabling modular activation depending on user consent status.

Kameleoon’s Pros and Cons

| Pros ✅ | Cons ❌ |
|---|---|
| Strong full-stack experimentation capabilities | Setups can take a long time for large experimentation programs |
| AI-driven targeting and personalization features | Overkill for small teams |
| Good privacy and compliance features for regulated industries | Platform complexity requires training |

Why Kameleoon is a Fit for Developers

Kameleoon gives developers the control they need without sacrificing performance or privacy. Its SDKs across Java, C#, Node.js, Python, and more let you run experiments and feature flags on both backend and frontend.

Its built-in consent logic lets you conditionally enable experimentation based on user consent, which is especially valuable in regulated environments.
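As a rough illustration of consent-gated experimentation on the backend, the sketch below serves the default experience and records nothing until consent is granted. It does not use Kameleoon's SDK: `ConsentGate`, `variant_for`, and the `assign` callback are hypothetical names introduced only for this example.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ConsentGate:
    """Hypothetical wrapper: assign and track only when the visitor consented."""

    default_variant: str = "control"
    # (visitor_id, experiment, variant) tuples that would be sent to analytics.
    tracked: list[tuple[str, str, str]] = field(default_factory=list)

    def variant_for(self, visitor_id: str, experiment: str, has_consent: bool,
                    assign: Callable[[str, str], str]) -> str:
        if not has_consent:
            # No consent: serve the default experience and record nothing.
            return self.default_variant
        variant = assign(visitor_id, experiment)
        self.tracked.append((visitor_id, experiment, variant))
        return variant


gate = ConsentGate()
# `assign` stands in for whatever assignment call your SDK exposes.
always_b = lambda visitor_id, experiment: "variant-b"
without_consent = gate.variant_for("visitor-1", "pricing-page", False, always_b)
with_consent = gate.variant_for("visitor-1", "pricing-page", True, always_b)
```

Keeping the consent check in one wrapper means no call site can assign or track a visitor by accident.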

Because Kameleoon supports full-stack experimentation, you don’t need separate tools for UI and backend experiments; everything lives in one unified platform.

Why Do Companies Use Kameleoon?

Organizations adopt Kameleoon when they want an experimentation platform that also supports personalization and feature management under strong compliance guarantees. Brands that handle regulated data or face high privacy expectations value Kameleoon’s built-in consent controls and modular activation.

The AI features (predictive scoring, prompt testing) help cross-functional teams generate ideas faster. Plus, its support across SDKs and environments allows it to scale across web, mobile apps, and backend logic without fragmentation.

What Users Say About Kameleoon

Positive:

Kameleoon is an optimization solution that allows me to be completely autonomous in setting up and launching A/B tests/customizations to improve site navigation, notably thanks to PBX. In just a few minutes, simply by talking with the AI, I can turn ideas into tests. It is a real time saver and very easy to use. The results of the first tests launched via PBX are convincing.

Source: G2

Critical:

The absence of a macro dashboard within the tool, which would allow for a more comprehensive visibility of all the experiences and customizations configured in the tool.

Source: G2

12. Eppo

Who this tool is for: Data-mature product teams running experiments directly from their data warehouse and analytics infrastructure.

Pricing: Custom pricing. You reach out to the Eppo team for a demo and pricing.

What is Eppo?

Eppo is a modern experimentation and feature flag platform that emphasizes warehouse-native analysis and simplicity in SDKs. It is designed to integrate tightly with existing data stacks and let engineering own both experiment setup and analysis.

What are Eppo’s Top Features?

- Lightweight SDKs with universal interface: Eppo provides SDKs in many languages, all sharing a consistent API for flags, experiments, and configuration.

- Local evaluation and polling for config updates: Once the SDK fetches configuration, variant evaluation happens locally (no network round trip per user).

- Warehouse-native analysis and metric definition: Experiment metrics and analyses live in the warehouse (e.g., Snowflake, BigQuery), not in a separate silo.

- Feature flags and experimentation: Eppo supports safe rollouts, kill switches, flag gating, and experiment logic in the same system.

- Contextual bandits and intelligent optimization: Beyond fixed splits, Eppo supports more adaptive experiment methods.
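The local-evaluation pattern described above can be sketched as follows. This is a generic illustration, not Eppo's SDK: `LocalEvaluator`, `fetch_config`, the config shape, and the refresh-on-read policy (used here instead of a background poller to keep the example short) are all assumptions.

```python
import hashlib
import time
from typing import Callable


class LocalEvaluator:
    """Hypothetical client: fetch config occasionally, evaluate in-process."""

    def __init__(self, fetch_config: Callable[[], dict],
                 ttl_seconds: float = 30.0) -> None:
        self._fetch_config = fetch_config
        self._ttl = ttl_seconds
        self._config: dict = {}
        self._fetched_at = float("-inf")  # forces a fetch on first use

    def _refresh_if_stale(self) -> None:
        now = time.monotonic()
        if now - self._fetched_at >= self._ttl:
            self._config = self._fetch_config()
            self._fetched_at = now

    def get_variant(self, flag_key: str, subject_id: str, default: str) -> str:
        self._refresh_if_stale()
        flag = self._config.get(flag_key)
        if not flag or not flag.get("enabled", False):
            return default
        variants = flag["variants"]
        # Equal split: hash the subject into one of len(variants) slots.
        digest = hashlib.sha256(f"{flag_key}:{subject_id}".encode("utf-8")).digest()
        return variants[int.from_bytes(digest[:4], "big") % len(variants)]


fetch_calls = []


def fake_fetch() -> dict:
    # Stand-in for the one network call; a real client would hit a config endpoint.
    fetch_calls.append(1)
    return {"new-onboarding": {"enabled": True, "variants": ["control", "treatment"]}}


evaluator = LocalEvaluator(fake_fetch, ttl_seconds=3600)
results = [evaluator.get_variant("new-onboarding", f"user-{i}", "control")
           for i in range(100)]
```

All 100 evaluations above share a single config fetch, which is the latency benefit local evaluation is meant to deliver.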

Eppo’s Pros and Cons

| Pros ✅ | Cons ❌ |
|---|---|
| Warehouse-native experimentation architecture | Pricing requires sales engagement |
| Simple SDK interface across multiple languages | Requires a mature data infrastructure |
| Clear experiment reporting interface | Limited visual testing tools |
| High statistical rigor with advanced experimentation models | Setup may require data engineering involvement |

Why Eppo is a Fit for Developers

Developers benefit from Eppo because it keeps experiment logic close to data and stays transparent. You don’t have to stitch metrics out of two systems, as metric definitions live where your data lives. The SDKs are simple and consistent, reducing cognitive overhead. Local evaluation means low latency and fewer dependencies on external calls.

Why Do Companies Use Eppo?

Engineering-driven companies adopt Eppo to consolidate experimentation logic and analysis under a single umbrella.

It is especially appealing when teams already use a warehouse-oriented data stack and want experiments to plug into that rather than drive parallel systems. Eppo’s architecture suits companies scaling many experiments with precise metric alignment.

What Users Say About Eppo

Positive:

Eppo has an excellent interface for showcasing experiment progress and results. Visually clean and easy to understand.

Also love their plug-and-play integration with Snowflake. We finished POC in 2 weeks!

They also have one of the best support teams. Always ready to answer questions and educate various team members from experimentation basics to advanced statistical methods.

Their tool supports companies just starting out with experimentation as well as more advanced methodologies.

Source: G2

Critical:

I wish that it were easier to group experiment results by certain user properties and/or product types.

Source: G2

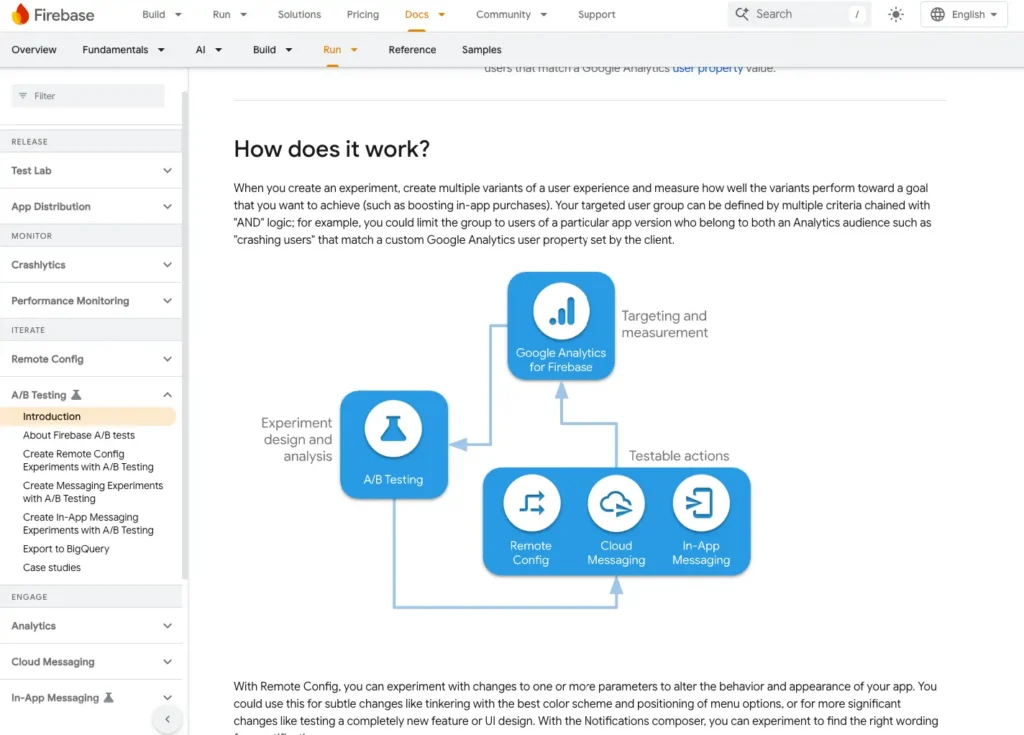

13. Firebase A/B Testing

Who this tool is for: Mobile product teams building on Firebase who want lightweight experimentation through Remote Config and Firebase Analytics.

Pricing: Starts free. After that, it’s usage-based pricing.

What is Firebase A/B Testing?

Firebase A/B Testing is Google’s built-in experimentation tool for mobile apps (iOS, Android) and in-app messaging. It uses Firebase Remote Config and Firebase Analytics to run experiments, monitor results, and roll out feature configurations to subsets of users.

What are Firebase A/B Testing’s Top Features?

- Remote Config parameter experimentation: You can change app behavior via config parameters and test different values in an experiment.

- Integration with analytics: You can choose Firebase Analytics events or funnels as experiment goals (e.g., retention, conversions) to assess variant performance.

- Targeting groups and audiences: You can limit experiments to specific audiences, like app version, platform, user properties, or Analytics audiences.

- Safe rollouts: Start experiments with a small subset of users, then expand exposure or roll out winning variants.

- Notifications and messaging experiments support: In addition to Remote Config, you can experiment on notification content via Firebase A/B Testing.

Firebase A/B Testing’s Pros and Cons

| Pros ✅ | Cons ❌ |
|---|---|
| Native experimentation for mobile apps using Firebase | Experimentation reporting is basic |
| Tight integration with Firebase Analytics and Remote Config | Harder to run complex multi-platform experiments |
| Easy rollout control for app features | Primarily focused on mobile apps |
| Free tier makes it accessible for early-stage teams | |

Why Firebase A/B Testing is a Fit for Developers

If your app already uses Firebase (Analytics, Remote Config), Firebase A/B Testing is a minimal-overhead option. You don’t need to onboard a full experimentation stack. Configuration, rollout, tracking, and measurement can all be done within the Firebase ecosystem. This makes it especially useful for mobile devs who want lean experimentation.

Why Do Companies Use Firebase A/B Testing?

App teams use Firebase A/B Testing when they want basic experimentation without integrating a third-party tool.

For many use cases (UI tweaks, message experiments, and feature toggles), Firebase provides a lightweight option. It’s especially useful for early-stage apps or features where bringing in a heavier experimentation stack is not warranted.

What Users Say About Firebase A/B Testing

Reviews of this tool aren’t available.

How to Choose the Right A/B Testing Tool as a Developer

From speaking to other developers who use A/B testing tools, here’s what we’ve learned is the right way to go about choosing your A/B testing software.

Start with Workflow Fit

The first question is simple: Does this tool live naturally in your workflow, or does it force you into a clunky UI?

If you’re working in Git and CI/CD every day, you’ll want experiments-as-code. That means versionable configs, environment parity (dev/stage/prod), and a clear audit trail. Tools like GrowthBook and Eppo were built with this in mind.

If your team has non-technical teammates who need to get involved, a hybrid approach works better. Convert and VWO FullStack give you SDKs and APIs for the heavy lifting, while still offering a UI for marketers or product managers who just need to ship a quick headline test.

Prioritize SDKs and API Coverage

One of the fastest ways to lose a developer’s trust is to say, “just paste this snippet.” That might work for a basic landing page test, but serious experimentation needs real SDKs and APIs.

Optimizely, LaunchDarkly, and ABsmartly stand out here with wide SDK coverage across Node.js, Python, Java, Go, Swift/Kotlin, and React/Vue frameworks. Mobile-focused teams often gravitate toward Firebase A/B Testing, which ties directly into Remote Config and Firebase Analytics.

Performance Isn’t Optional

Performance comes up again and again in developer circles. If a tool slows down your site, causes flicker, or tanks Core Web Vitals, it’s a non-starter.

That’s why platforms like Convert (which uses edge-assembled scripts and first-party APIs) get respect from developers. They let you run experiments without adding bloat or introducing debugging nightmares.

Match the Tool to Your Stage of Growth

The “right” tool often depends on your company’s size and complexity:

- Startups and small teams: Go for free tiers and open-source control. GrowthBook and PostHog give you experimentation without contracts or overhead.

- Growth-stage SaaS or ecommerce: You’ll want balance. Tools like VWO FullStack, Statsig, and Convert give non-dev teammates enough autonomy while keeping the code-level flexibility developers need.

- Enterprise or regulated industries: At this scale, stability, privacy, and compliance are non-negotiable. Optimizely Full Stack, Convert, and Adobe Target offer mature APIs, enterprise support, and the infrastructure to handle large-scale, complex tests.

Don’t Overlook Debuggability

It’s easy to underestimate this one until you’ve lost a week to “why didn’t this variation fire?”

Other developers consistently flag debuggability as a must-have: logs, QA tokens, debug overlays, and clear error reporting. A good tool doesn’t just run the test; it tells you why something broke so you can fix it fast.
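One way to picture "tells you why" is an evaluation call that returns a reason alongside the variant. The sketch below is a hypothetical design, not any vendor's API: `Evaluation`, `evaluate`, the `running` and `targeting` fields, and the `forced_variant` QA override are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Evaluation:
    variant: str
    reason: str  # human-readable explanation suitable for logs or a debug overlay


def evaluate(experiment: dict, user: dict,
             forced_variant: Optional[str] = None) -> Evaluation:
    """Return the variant to serve plus the reason it was chosen."""
    if forced_variant is not None:
        return Evaluation(forced_variant, "forced by QA override")
    if not experiment.get("running", False):
        return Evaluation("control", "experiment is not running")
    # Exact-match targeting: every listed attribute must equal the expected value.
    for attribute, expected in experiment.get("targeting", {}).items():
        actual = user.get(attribute)
        if actual != expected:
            return Evaluation(
                "control",
                f"targeting mismatch on '{attribute}': "
                f"got {actual!r}, expected {expected!r}",
            )
    return Evaluation("treatment", "all targeting rules matched")


experiment = {"running": True, "targeting": {"country": "US", "plan": "pro"}}
outcome = evaluate(experiment, {"country": "US", "plan": "free"})
```

Logging `outcome.reason` next to the exposure event turns "why didn't this variation fire?" into a lookup rather than an investigation.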

Learn More: QA-ing Client-Side & Server-Side Experiments

Wrapping Up

Your choice of developer-friendly A/B testing tool should hinge on team size, experiment volume, and how tightly you want experimentation tied into your workflows.

Pick a platform that minimizes integration overhead so you can stay focused on writing reliable code, shipping experiments quickly, and scaling learnings without burning cycles debugging SDKs or chasing data mismatches.

Frequently Asked Questions

What is the best A/B testing tool for developers with an API?

There’s no universally “best” tool. It depends on your stack, budget, and maturity. But the ones developers often favor are those with fully featured SDKs (Node, Java, Python, and mobile SDKs), strong APIs for managing experiments programmatically, and support for experiment-as-code workflows (config files in Git, CI/CD integration).

Tools like Convert, LaunchDarkly, GrowthBook, Optimizely Full Stack, and Statsig are often cited in developer communities for having mature, stable APIs.

Convert’s REST API v2 supports full experiment management (projects, audiences, goals, and reports) with HMAC-signed authentication for security, while Optimizely offers extensive endpoints for enterprise workflows. Both provide programmatic control that fits CI/CD pipelines.

Which platforms have SDKs for Node.js, Python, or Java?

Many modern experimentation platforms support multiple SDKs. For example:

- LaunchDarkly and Optimizely offer broad SDK coverage across Node.js, Java, Python, Go, mobile (iOS/Android), etc.

- GrowthBook supports SDKs and client libraries across several languages and frameworks, with open-source versions you can host if needed.

- Convert supports full-stack experimentation via its JavaScript SDK and APIs, giving engineers flexibility and control to integrate experiments natively within their stack.

- Firebase is a strong option for mobile-first teams using Remote Config.

Convert’s edge-assembled scripts and SDKs ensure experimentation never compromises speed, compliance, or maintainability.

If your stack is niche, always verify the vendor’s SDK support and version maturity for your desired language.

Are there open-source A/B testing tools for developers?

Yes. Several open-source tools provide core experimentation capabilities that you can self-host or extend. Tools like GrowthBook (open source edition) and PostHog (self-hosted experimentation + analytics) are popular. The tradeoff is that you often must manage hosting, scaling, integrations, and support yourself.

How do developers integrate A/B testing into CI/CD pipelines?

The best practice is to treat experiments like code: you store experiment configurations or flags in version control, promote them through staging to prod environments, and have automated validations (linting and schema checks).

Many platforms support deploying changes via APIs or CLI tools, so your testing logic is part of your build. Also use feature flag gates (rollouts) to safely ramp traffic and roll back if performance or errors spike.
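As one possible shape for the automated validation step, the sketch below checks an experiment config before it is promoted. The schema (`key`, `variants`, `weight`, `rollout_percent`) is a hypothetical format invented for this example, not any platform's file format.

```python
def validate_experiment(config: dict) -> list[str]:
    """Return human-readable problems; an empty list means the config is valid."""
    errors: list[str] = []

    key = config.get("key")
    if not isinstance(key, str) or not key:
        errors.append("key must be a non-empty string")

    variants = config.get("variants")
    if not isinstance(variants, list) or len(variants) < 2:
        errors.append("variants must contain at least two entries")
    else:
        names = [v.get("name") for v in variants]
        if len(set(names)) != len(names):
            errors.append("variant names must be unique")
        total_weight = sum(v.get("weight", 0) for v in variants)
        if abs(total_weight - 1.0) > 1e-9:
            errors.append(f"variant weights must sum to 1.0, got {total_weight}")

    rollout = config.get("rollout_percent", 0)
    if not isinstance(rollout, (int, float)) or not 0 <= rollout <= 100:
        errors.append("rollout_percent must be a number between 0 and 100")

    return errors


example = {
    "key": "checkout-button-color",
    "variants": [
        {"name": "control", "weight": 0.5},
        {"name": "green", "weight": 0.5},
    ],
    "rollout_percent": 10,  # start small, then ramp in later commits
}
problems = validate_experiment(example)
```

In a pipeline, a small wrapper script could load each config file from the repository, call `validate_experiment`, and fail the build when the returned list is not empty.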

What’s the difference between feature flagging and A/B testing for developers?

Feature flags act as on/off switches or rollout controls. They enable or disable functionality dynamically.

On the other hand, A/B testing goes further. It randomly assigns users to different variations and collects metrics to compare outcomes.

Good experimentation platforms for developers let you have both, so you can use feature flags to gate changes, but run variation logic (and telemetry) through experimentation layers so you can measure impact, iterate, and validate decisions securely.
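To show what "compare outcomes" can mean in practice, here is a minimal sketch of a two-sided, two-proportion z-test on conversion counts. It is one common textbook approach, not the method any particular platform uses, and the function name and example numbers are made up for illustration.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int,
                          conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_error == 0:
        raise ValueError("no variance: every user or no user converted")
    z = (rate_b - rate_a) / std_error
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Made-up counts: 100 of 1,000 control users converted vs 150 of 1,000 in treatment.
z_score, p_value = two_proportion_z_test(100, 1000, 150, 1000)
```

A plain feature flag never needs this step; an experiment does, because the point is to decide whether the observed difference is likely to be real.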

Which tools are best for mobile app developers?

Firebase A/B Testing is built into Google’s Firebase ecosystem and works natively with iOS/Android apps via Remote Config. For more flexibility or cross-platform testing, tools like LaunchDarkly, Statsig, and GrowthBook also offer mobile SDKs (iOS/Android) and remote variation control.

Convert’s SDK can power backend-driven experiments for mobile experiences, offering flexible integration options for native apps.

How do developers run A/B tests without hurting site performance?

Proxy-based delivery and edge-assembled scripts (like Convert’s) are respected by developers because they balance UX consistency with Core Web Vitals optimization.

Look for platforms with lean scripts, async loading, and CDN-based delivery to preserve Core Web Vitals.

Do developer-focused tools support GDPR and privacy compliance?

Yes, but depth varies. Convert (SOC 2, HIPAA, and ISO 27001) emphasizes privacy-first APIs, including BYOID and consent-mode support, and does not send PII by default. GrowthBook and PostHog offer self-hosting for full data control. Enterprise tools like Optimizely and Adobe Target provide compliance certifications (SOC 2 and HIPAA). Always confirm whether the SDKs and APIs respect consent mode and identity requirements.

Written By

Uwemedimo Usa

Edited By

Carmen Apostu