Testing Mind Map Series: How to Think Like a CRO Pro (Part 15)

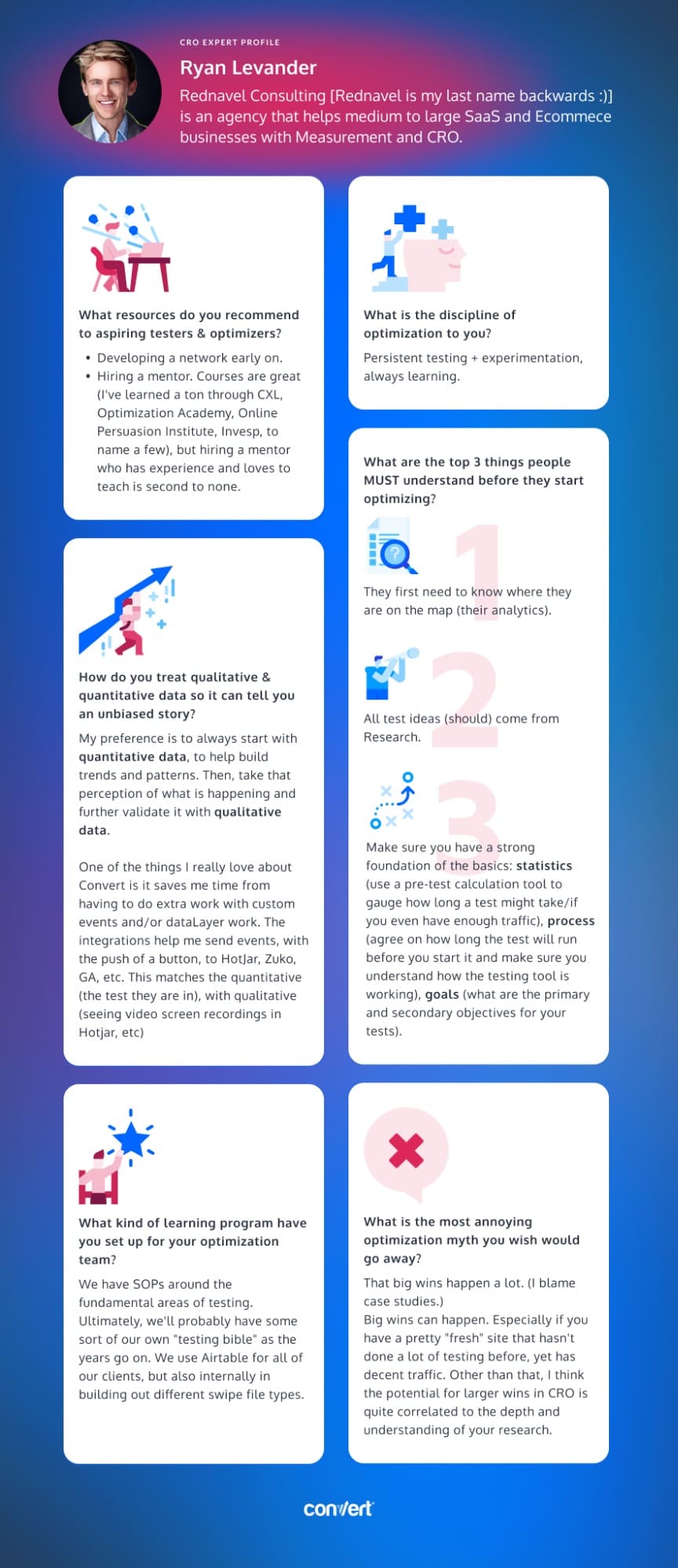

Interview with Ryan Levander

Today we have a one-of-a-kind interview for you with Ryan Levander of Rednavel Consulting. A customer of Convert, Ryan sheds light on what it takes to run successful tests.

Ryan says that one of his favorite things about CRO is the opportunity to gain customer behavior insights through research. From analytics and surveys to user testing and video recordings, there are so many different methods available. The key, he says, is to not limit yourself to just one method to build a hypothesis for a test idea.

So if you’re thinking about becoming a CRO pro, be prepared to do your research!

You’re about to embark on a journey that will change the way you think about CRO. Read on to get a feel for what experimentation looks like in the real world (hint: big wins don’t happen all that often!).

Ryan, tell us about yourself. What inspired you to get into testing & optimization?

Getting into testing and optimization really came down to listening to my audience. At the time (around 2015), I had made a transition from web development into video production.

The clients I was working with loved the videos, but they still had questions about why they were or weren’t getting results from the increased awareness.

Naturally, I wanted to help my clients solve their biggest pain points, and it bothered me that I didn’t have those answers. I also saw a gap in the market for marketers who could measure websites in a predictable way.

Learning measurement in depth was a natural first step into CRO. I wasn’t going to stop at just the numbers 🙂

How many years have you been optimizing for? What’s the one resource you recommend to aspiring testers & optimizers?

I have been measuring and optimizing for 6 years now. I started out mostly with analytics projects that ended in reporting/dashboard setups. CRO has been one of my main focuses as a consultant over the last 3 years.

One resource to rely on is a network, and you should start developing it early on. I found some solid people in the Measure Slack community and formed a small mastermind with them. I still talk with most of them today and bounce ideas off them.

One more is hiring a mentor. I waited too long to do this. Courses are great (I’ve learned a ton through CXL, Optimization Academy, Online Persuasion Institute, Invesp, to name a few), but hiring a mentor who has experience and loves to teach is second to none.

Answer in 5 words or less: What is the discipline of optimization to you?

Persistent testing + experimentation, always learning.

What are the top 3 things people MUST understand before they start optimizing?

They first need to know where they are on the map (their analytics), so to speak. If you want to reach 100 sales next month, you have to know how many you are getting this month. And not just the number, but the how: get detailed with your analytics to understand the actions users take on your site.

All test ideas (should) come from research. There are so many different types of research to be done, and that’s probably one of the parts I like most about CRO. Analytics, surveys, jobs-to-be-done-style interviews, user testing, on-site polls, video recordings, and joining sales calls or talking with the sales team who talk to customers all day (if relevant, we work in SaaS too) are all different types of research that help build a hypothesis for a test idea. Don’t just use one research method!

Make sure you have a strong foundation in the basics:

Statistics – use a pre-test calculation tool to gauge how long a test might take and whether you even have enough traffic.

Process – agree on the minimum time the test will run before you start it. Also, make sure you understand how your chosen testing tool works, and decide on a confidence level (%) you are OK with.

Goals/micro-goals – what are the primary and secondary objectives? When running tests further away from the sale/sign-up/lead, it’s generally a good idea to set up more goals so your data tells a better “story” of what is happening (hint: do this for navigation tests).
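To make the statistics point above concrete, here is a minimal sketch of the kind of arithmetic a pre-test calculator performs. It is not the formula of any particular tool: it assumes a standard two-sided test comparing two conversion rates with equal traffic per variant, and the function names, the 5% significance level, and the 80% power are illustrative assumptions rather than anything Ryan specifies.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant to detect a relative lift.

    Uses the common normal-approximation formula for comparing two
    proportions (an assumption; real tools may use other methods).
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)  # expected rate in the variation
    p_bar = (p1 + p2) / 2                     # pooled rate under "no difference"
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_power = NormalDist().inv_cdf(power)
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_power * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


def estimated_days(n_per_variant, daily_visitors, variants=2):
    """Days needed if all daily visitors are split evenly across variants."""
    return math.ceil(n_per_variant * variants / daily_visitors)


if __name__ == "__main__":
    # Hypothetical inputs: 3% baseline conversion rate, hoping for a 10% relative lift.
    n = sample_size_per_variant(baseline_rate=0.03, relative_lift=0.10)
    print(f"Visitors needed per variant: {n}")
    print(f"Estimated duration at 2,000 visitors/day: {estimated_days(n, 2000)} days")
```

If the estimated duration comes out at several months, that is usually a sign the site does not have enough traffic for that test, or that the test needs a bolder change with a larger expected lift.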

How do you treat qualitative & quantitative data so it tells an unbiased story?

My preference is to always start with quantitative data. You will have more of it, which helps build trends and patterns that tell a useful truth about what’s going on. Then you can take that picture of what is happening and validate it further with qualitative data, digging into “how” people are or aren’t converting.

One of the things I really love about Convert is that it saves me from extra work with custom events and/or the dataLayer. The integrations help me send events, with the push of a button, to Hotjar, Zuko, GA, etc. This matches the quantitative (the test a user is in) with the qualitative (video screen recordings and heatmaps in Hotjar, for example).

What kind of learning program have you set up for your optimization team? And why did you take this specific approach?

We have SOPs around the fundamental areas of testing. Ultimately, we’ll probably have our own “testing bible” of some sort as the years go on. I currently use tools like Roam Research and Obsidian to connect my thoughts on articles I read in the space, then share them with the team once I’ve developed my own thoughts and arguments around a topic.

We use Airtable for all of our clients, and also internally to build out different types of swipe files.

Learning has always been one of the most important things for me and, in turn, my small team. Naturally, I want team members who have the right character qualities, like a hunger for knowledge and a drive to be better. That is the same attitude one should have for testing: a “continuous improvement” mindset.

What is the most annoying optimization myth you wish would go away?

That big wins happen a lot. (I blame case studies)

Big wins can and do happen. I’d say if you have a pretty “fresh” site that hasn’t done a lot of testing before, yet has decent traffic (and you’re a fairly experienced CRO familiar with the industry), then there’s a good chance of a larger win early on.

Other than that, I think the potential for larger wins in CRO is closely tied to the depth of your research and how well you understand it. I have found that CROs can become partial to one type of research (we’re probably all guilty of this, favoring whichever one we’re best at) when multiple inputs really are key.

I like to use the jelly beans in the jar example. If you are the only one guessing how many beans are in the jar, there is a chance you could be off by a lot. If a group of people guesses, the average of all the guesses is likely to be much closer to the real number. The more research types and inputs you have, the better your chances of landing a test winner.
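The jelly bean intuition can be checked with a small simulation. This is only a sketch under simplifying assumptions that are not from the interview: each guess is independent and scattered evenly around the true count, and the jar size, group size, and spread of guesses are made-up numbers. Real research methods can share blind spots, so the benefit in practice is likely smaller than this idealized case suggests.

```python
import random
import statistics


def simulate_guess_errors(true_count=1000, group_size=25, noise_sd=300,
                          trials=2000, seed=42):
    """Compare the typical error of one guesser with a group's average guess.

    Returns (mean absolute error of a solo guess,
             mean absolute error of the group average).
    All parameters are hypothetical values chosen for illustration.
    """
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    solo_errors = []
    group_errors = []
    for _ in range(trials):
        # Assumption: guesses are independent and centered on the true count.
        guesses = [rng.gauss(true_count, noise_sd) for _ in range(group_size)]
        solo_errors.append(abs(guesses[0] - true_count))
        group_errors.append(abs(statistics.mean(guesses) - true_count))
    return statistics.mean(solo_errors), statistics.mean(group_errors)


if __name__ == "__main__":
    solo, group = simulate_guess_errors()
    print(f"Average miss for a single guesser: {solo:.0f} beans")
    print(f"Average miss for the group average: {group:.0f} beans")
```

Under these assumptions, the group average misses by far less than a lone guesser, which is the same reason combining several research inputs tends to produce a sturdier hypothesis than relying on one.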

Sometimes, finding the right test to run next can feel like a difficult task. Download the infographic above to use when inspiration becomes hard to find!

Hopefully, our interview with Ryan will help guide your experimentation strategy in the right direction!

What advice resonated most with you?

Be sure to stay tuned for our next interview with a CRO expert who takes us through even more advanced strategies! And if you haven’t already, check out our interviews with Gursimran Gujral, Haley Carpenter, Rishi Rawat, Sina Fak, Eden Bidani, Jakub Linowski, Shiva Manjunath, Andra Baragan, Rich Page, Ruben de Boer, Abi Hough and our latest with Alex Birkett.