Testing Mind Map Series: How to Think Like a CRO Pro (Part 12)

Interview with Abi Hough

This interview with Abi is one to bookmark.

Having spent 20 years in optimization, she offers in-depth insights into how it should be done scientifically, without bias or prejudice towards any particular idea.

She explains the importance of good-quality data collection methods, as well as an understanding of exactly what everyone on the team does. She offers advice on breaking bad habits, such as treating every shiny new idea as something worth optimizing. The focus should be on optimizing the user interface, the user journey, and the user experience.

You’ll want to read this interview beginning to end if you care about making your website the best it can be.

Abi, tell us about yourself. What inspired you to get into testing & optimization?

My journey into testing and optimisation began in the early 2000s, when I was a front-end developer/designer. I was horrified when I sat through my first usability lab session, watching people trying to use what I’d built. How could they not see X, Y, Z? Why can’t they figure A, B or C out? What is wrong with these people!?

You know the saying, “it’s not you, it’s me”?

Well, it was definitely me, and this was the most devastating breakup I ever had to endure: the one between my own ego and an understanding of what people actually needed and wanted. It was this fundamental shift that piqued my interest in user research and the benefits and insights it could bring.

Testing was a natural progression from that: a way of proving, refuting or expanding on ideas scientifically and without bias. This paved the way towards a more optimised way of working, in favour of both the user and the company.

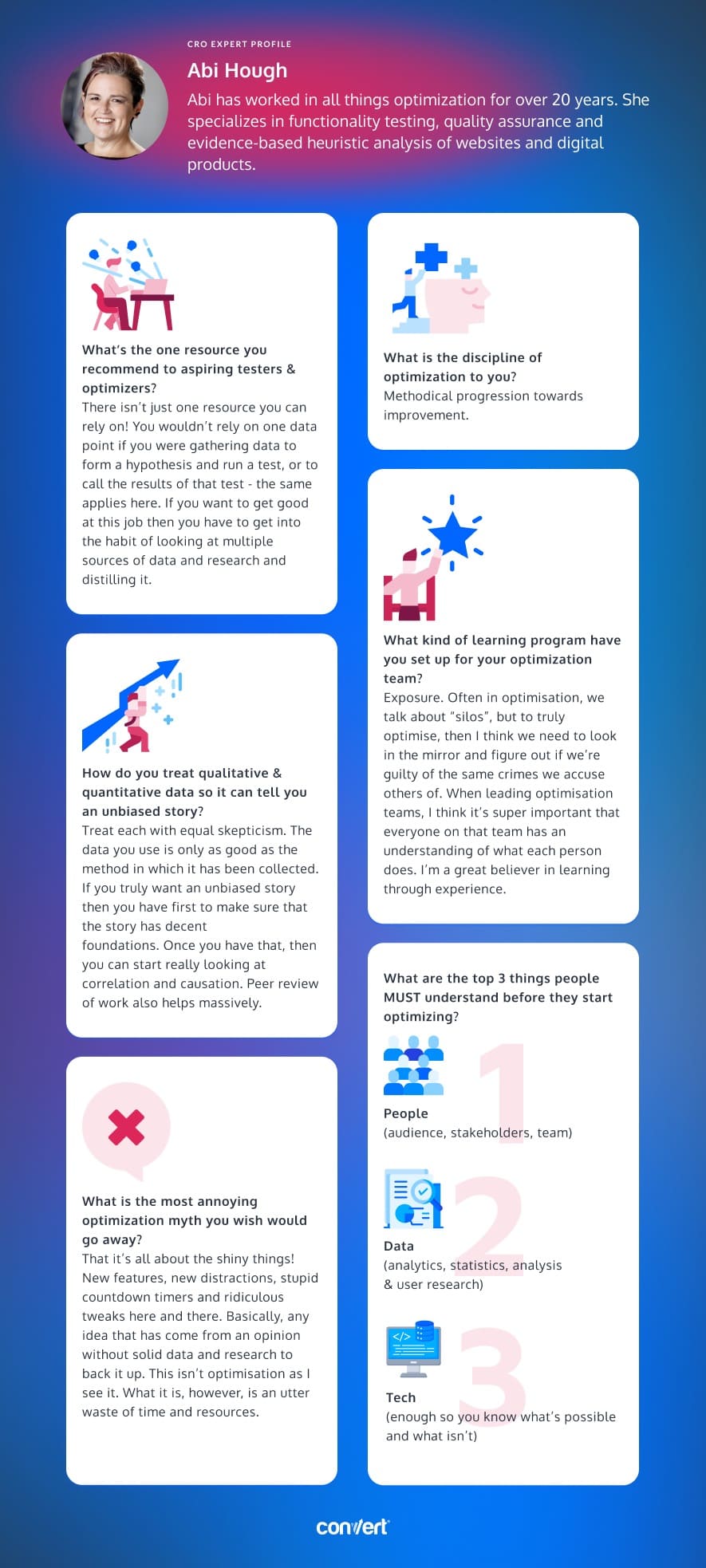

How many years have you been optimizing for? What’s the one resource you recommend to aspiring testers & optimizers?

Trick question?

There isn’t one resource you can rely on!

You wouldn’t rely on one data point if you were gathering data to form a hypothesis and run a test, or to call the results of that test – the same applies here.

If you want to get good at this job then you have to understand and get into the habit of looking at multiple sources of data and research and distilling it. In the 20+ years I’ve been optimising, this is the best bit of advice I can give anyone 🙂 So, no. I’m not going to name-drop one thing in particular, other than tipping the hat to everyone’s internal sense of curiosity, research and analysis skills.

Answer in 5 words or less: What is the discipline of optimization to you?

Methodical progression towards improvement.

What are the top 3 things people MUST understand before they start optimizing?

- People (audience, stakeholders, team)

- Data (analytics, statistics, analysis & user research)

- Tech (enough so you know what’s possible and what isn’t)

How do you treat qualitative & quantitative data so it tells an unbiased story?

Treat each with equal skepticism.

The data you use is only as good as the method by which it was collected. Evaluate whether the data you have, be it qual or quant, has been gathered in a fair and accurate way.

Countless times I have been presented with data, only to find once I start digging into it that it’s flawed in one way or another. Dodgy analytics setups, biased survey questions, the list goes on.

So if you truly want an unbiased story, you first have to make sure that the story has decent foundations.

Once you have that, then you can start really looking at correlation and causation.

Peer review of work also helps massively. Think of it this way: you wouldn’t give any kudos to a groundbreaking medical discovery if the research hadn’t been critiqued by other specialists in that particular discipline. The same applies to optimisation and testing. If optimisers wish to claim a scientific approach to what they do, then they need to make sure that approach is given more than just lip service.

What kind of learning program have you set up for your optimization team? And why did you take this specific approach?

Exposure. In optimisation we often talk about “silos”, but to truly optimise, I think we need to look in the mirror and figure out whether we’re guilty of the same crimes we accuse others of.

When leading optimisation teams, I think it’s super important that everyone on the team understands what each other person does and how they do it. I’m not asking that a UI designer suddenly be thrown into the depths of JavaScript coding, or that an analytics guru suddenly gets Figma or Sketch dumped in front of them and is asked to prototype a checkout process. What I do want, though, is for every member of the team to have an appreciation of each other’s work, how it’s approached, and a fundamental understanding of it, so they know what’s involved.

Gaining this basic knowledge stack is invaluable and actually can lead to insights as to how to optimise the optimisers.

I’m a great believer in learning through experience. It’s a great teacher… like the time I made everyone on my team set their monitors to the most common resolution that desktop visitors used to view the website we were optimising. That sparked some debate, a lot of frustration and some great ideas for improving the user experience! Sometimes you need to “feel” what it’s like, not just interpret data and read words about it.

What is the most annoying optimization myth you wish would go away?

That it’s all about the shiny things! New features, new distractions, stupid countdown timers and ridiculous tweaks here and there. Basically, any idea that has come from an opinion without solid data and research to back it up.

This isn’t optimisation as I see it. What it is, however, is an utter waste of time and resources.

Some of the best optimisation I have done involves improvements to the UI, user journey and user experience. If you want to see optimisation with longevity, then this is where to start.

Sometimes, finding the right test to run next can feel like a difficult task. Download the infographic above to use when inspiration becomes hard to find!

Hopefully, our interview with Abi will help guide your experimentation strategy in the right direction!

What advice resonated most with you?

Be sure to stay tuned for our next interview with a CRO expert who takes us through even more advanced strategies! And if you haven’t already, check out our interviews with Gursimran Gujral, Haley Carpenter, Rishi Rawat, Sina Fak, Eden Bidani, Jakub Linowski, Shiva Manjunath, Andra Baragan, Rich Page, and our latest with Ruben de Boer.